Today, businesses need infrastructure that’s not just strong, but also super flexible and able to grow fast. Trying to manage all that by hand just won’t cut it anymore. That’s why Infrastructure as Code, or IaC, has become such a game-changer. It’s totally changed how we build and manage our digital foundations.

IaC means you define and manage your infrastructure using code, bringing all those great software development practices into how you run things. And when it comes to IaC tools, Terraform is a real standout. It lets you define and set up infrastructure across all sorts of cloud providers with surprising ease. Now, IaC and Terraform are powerful on their own, but if you really want to make things scalable and easy to manage in big, complex setups, there’s one key idea: modular design. This guide will show you how modular Terraform helps you build infrastructure that’s not just automated, but truly scalable, consistent, and simple to keep up with.

What is Infrastructure as Code (IaC) and Why is it Indispensable?

Infrastructure as Code (IaC) is a huge shift in how we handle digital infrastructure. It’s all about managing and setting up your infrastructure using configuration files that computers can read, instead of doing everything by hand, which often leads to mistakes. Basically, IaC treats infrastructure parts like networks, virtual machines, and databases with the same care and methods you’d use for regular software code. This way, you automate setting up, deploying, configuring, and managing your infrastructure, from physical hardware to virtual machines and cloud stuff.

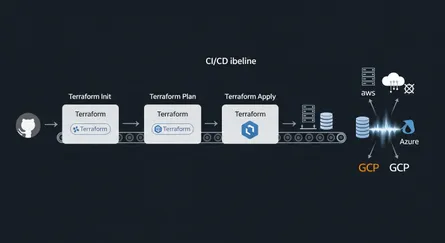

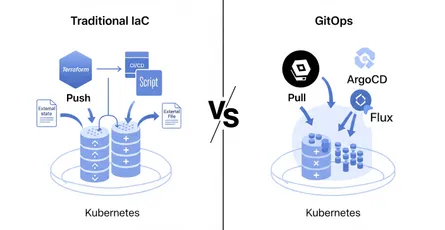

The main ideas behind IaC are what make it so effective. Automation is a huge one; it gets rid of all those manual steps in setting up, deploying, configuring, and managing your infrastructure. This automation is key for agile development, continuous integration/continuous delivery (CI/CD), and modern DevOps ways of working. It’s not just about being fast, either. This automation directly makes businesses more agile. When you can set up and manage infrastructure quickly, you can develop, test, and deploy applications much faster. This speeds up the whole software development process, letting companies react to market changes, release new features, and grow operations way quicker than old manual methods ever would. That really helps with staying competitive and making money.

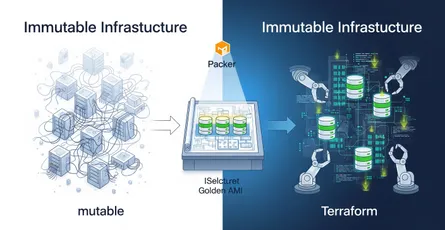

Consistency and repeatability are super important too. Because you’re using code, IaC really cuts down on configuration errors and gets rid of those inconsistencies that pop up when different people are doing manual setups. This means if you apply the same configuration over and over, you’ll get the same result, no matter what the system looked like before. That property is called idempotency. This helps different teams set up consistent and repeatable ways to manage infrastructure. Plus, IaC encourages immutability. That means infrastructure is treated as resources that aren’t modified in place. If you need a change, you create brand new infrastructure and swap it out, instead of tweaking the old stuff. This makes it easier to roll back quickly and keeps your environments really consistent. Finally, just like any other code, IaC configurations work great with version control. This gives you a full history of changes and helps keep track of who did what to the infrastructure.

The advantages you get from IaC are huge and really make a difference. Speed is a big one; setting up infrastructure with code scripts is way faster than doing it by hand, so you get resources ready quickly. Accuracy is another major plus. Code-driven setups drastically cut down on human errors and inconsistencies. Version control also boosts accountability and transparency, giving you a full history of changes, which is great for audits and compliance. IaC also makes things more efficient, making software development smoother, using resources better, and letting IT teams focus on more important work. These efficiencies often mean big cost savings, because automation minimizes mistakes and gets rid of time-consuming manual work, helping you save on hardware, staff, and storage. And IaC naturally supports scalability, letting companies easily grow their infrastructure without spending too much, since automation reduces those misconfigurations and manual tasks that usually slow things down.

Now, people often praise IaC for cutting down on security risks by reducing human error, which is frequently cited as a leading cause of security incidents. But its ideas of consistency and reproducibility actually help reduce risks across all your operations, not just security. Consistent, repeatable processes mean less configuration drift and fewer surprises in your CI/CD pipelines and testing. This consistency helps with all sorts of operational risks, like unexpected downtime, slow performance, and tricky debugging issues. It makes sure your environments act predictably, which is super important for overall system reliability and smooth disaster recovery. So, it’s not just about security; it’s about making your whole infrastructure stable and resilient.

How Does Terraform Empower Your IaC Strategy?

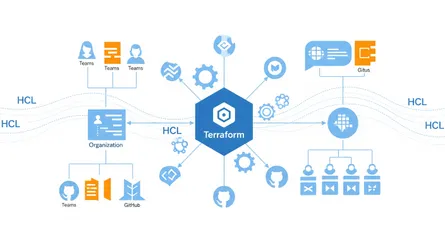

Terraform has really become a core part of the Infrastructure as Code world. It’s an IaC tool from HashiCorp, written in Go. It started out as open source, and since 2023 HashiCorp has released it under the Business Source License. Many teams treat it as the de facto standard for managing cloud resources. Terraform lets engineers define their infrastructure in code, then set up, manage, and deploy those resources across different cloud providers like AWS, Azure, and Google Cloud (GCP), all with one tool. And it’s not just for cloud environments; Terraform can also automate things with Kubernetes, Helm, and even custom providers, showing just how flexible it is.

One key thing about Terraform is that it uses a declarative configuration language called HashiCorp Configuration Language, or HCL. With declarative syntax, you tell Terraform what you want your infrastructure to look like in the end, and it works out the steps needed to get there and keep it that way. This is pretty different from imperative ways of working, where you have to spell out every single step and the order it needs to happen in. HCL is a Domain Specific Language (DSL), which means it’s designed for this specific job. That makes it easier and faster for developers to get productive with just a little bit of syntax learning, because it hides away the complicated stuff you’d find in general programming languages.

Terraform’s declarative style, along with how simple HCL is, makes it much easier to manage complex, spread-out systems at scale. Since you’re focusing on what your infrastructure should be, not how to build it step-by-step, engineers can let Terraform handle all the tricky dependency mapping and execution order. This really lightens the load on engineers, letting them focus on designing the architecture instead of tiny operational details. This simplification is vital for dealing with the natural complexity of big cloud environments, where trying to manage dependencies by hand would lead to tons of errors and take forever. The outcome? Faster development cycles and fewer deployment failures caused by wrong sequencing.
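To make the dependency mapping concrete, here’s a minimal sketch. The resource names and CIDR ranges are made up for illustration. The subnet refers to the VPC’s ID, and that reference alone is enough for Terraform to work out that the VPC has to exist first.

```hcl
# Hypothetical names and address ranges, for illustration only.
resource "aws_vpc" "main" {
  cidr_block = "10.0.0.0/16"
}

resource "aws_subnet" "app" {
  # Referencing aws_vpc.main.id creates an implicit dependency:
  # Terraform creates the VPC before the subnet without being told to.
  vpc_id     = aws_vpc.main.id
  cidr_block = "10.0.1.0/24"
}
```

Notice that nothing in the configuration says “create the VPC, then the subnet.” The order falls out of the reference, which is the whole point of the declarative style.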

Terraform works thanks to a few key parts:

- Providers are like plugins that let Terraform talk to specific infrastructure resources, like AWS or Azure Resource Manager. They’re the bridge, turning your Terraform setups into the right API calls.

- Resources are the actual infrastructure pieces Terraform manages, things like virtual machines, networks, and databases. They’re the basic building blocks of any Terraform setup.

- Variables work a lot like variables in regular programming. You use them to organize your code, make it easier to change how things behave, and help with reuse. Variables can be different types, like string, number, bool, list, and map.

- Outputs show you values from data sources, resources, or variables after Terraform runs, or they can expose details from a module for use somewhere else.

- Data Sources pull in information from outside places, like existing infrastructure. This lets you use that info in new resources or local variables.
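Here’s a small sketch that puts the five pieces above side by side. Everything specific in it (the region default, the AMI name filter, the instance type, the provider version constraint) is an assumption picked for the example, not a recommendation.

```hcl
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # assumed constraint for this sketch
    }
  }
}

# Provider: the plugin that turns this configuration into AWS API calls.
provider "aws" {
  region = var.region
}

# Variable: an input that changes behavior without editing the code.
variable "region" {
  description = "AWS region to deploy into."
  type        = string
  default     = "us-west-2"
}

# Data source: looks up something that already exists (here, an AMI).
data "aws_ami" "amazon_linux" {
  most_recent = true
  owners      = ["amazon"]

  filter {
    name   = "name"
    values = ["al2023-ami-*-x86_64"] # assumed name pattern
  }
}

# Resource: an infrastructure object that Terraform creates and manages.
resource "aws_instance" "web" {
  ami           = data.aws_ami.amazon_linux.id
  instance_type = "t3.micro"
}

# Output: a value exposed after apply, or to a calling module.
output "web_private_ip" {
  description = "Private IP address of the example instance."
  value       = aws_instance.web.private_ip
}
```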

You really can’t overstate how important Terraform’s state management is. Terraform is a stateful tool, meaning it keeps track of the infrastructure it manages in a state file and compares your IaC setup against that state on every run. This file, usually called terraform.tfstate, is a detailed JSON structure that records every resource Terraform manages along with its attributes. New users might start with a local state file, but that’s a big no-no for teams because it can easily lead to conflicts and configuration drift. For bigger operations, especially with lots of engineers, the state is usually split into several files and stored remotely in services like Amazon S3 or Terraform Cloud. Remote state is key because it lets multiple people access it, locks the state to stop simultaneous changes, and makes things more secure with encryption.
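As a rough sketch of what remote state looks like in practice, here’s an S3 backend block. The bucket, key, and table names are hypothetical, and the bucket and lock table would need to exist before you run terraform init.

```hcl
terraform {
  backend "s3" {
    bucket  = "example-terraform-state"   # hypothetical bucket name
    key     = "network/terraform.tfstate" # path of this state within the bucket
    region  = "us-west-2"
    encrypt = true # server-side encryption for the state object

    # State locking through a DynamoDB table (hypothetical name).
    # Newer Terraform releases also offer S3-native locking; check the
    # backend documentation for the version you run.
    dynamodb_table = "example-terraform-locks"
  }
}
```

Giving each part of your infrastructure its own key is one simple way to split the state into several files.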

Here’s something really important to grasp: even though state management is a core feature, it can also be a major source of complexity and risk if you don’t handle it carefully, especially when teams are working together. The state file, while vital for Terraform’s declarative way of working, is your single source of truth. If this file gets corrupted, goes stale, or isn’t managed well (say, because someone made manual changes outside of Terraform, what we call “ClickOps”), it can cause big infrastructure problems, like accidentally deleting resources or widespread misconfigurations. This means state management isn’t just a technical detail; it’s a critical operational and security issue. It really highlights that even with all the benefits of automation, you still need strong human processes and dedicated tools around that state file to prevent major outages or data breaches.

Why Modular Design is the Cornerstone of Scalable Terraform?

Terraform modules are absolutely essential for building Infrastructure as Code that you can scale and easily maintain. Think of them as reusable, self-contained bundles of Terraform setups. These modules wrap up specific resources, like virtual machines, networks, and databases, putting all the related resources, variables, and outputs into one neat package. It’s worth remembering that Terraform sees every configuration as a module; the folder where you run terraform plan or apply is what it calls the “root module”.

Modular design offers many advantages, as you can see in the table below. It really helps with scalability and other important IaC benefits:

| Benefit | Description |
|---|---|
| Reusability | Infrastructure components can be defined once and reused across multiple projects and environments, eliminating code duplication and simplifying the codebase. |
| Consistency | Uniform configurations and reliability are ensured across all deployments and environments, minimizing errors and preventing configuration drift. |
| Maintainability | Functionality is encapsulated, centralizing updates to shared components and simplifying troubleshooting efforts. |
| Scalability | Efficient infrastructure growth is enabled by effectively managing complexity, reducing misconfigurations, and accelerating deployments at scale. |
| Collaboration | Teamwork is facilitated as members can easily share, maintain, and contribute to common infrastructure components, streamlining DevOps practices. |
| Abstraction | Complex configurations are simplified by hiding internal implementation details, allowing users to focus solely on inputs and outputs. |
| Reduced Sprawl | The proliferation of redundant configurations is combated by enforcing the “Don’t Repeat Yourself” (DRY) principle, leading to a more concise codebase. |

Modularity directly tackles the problem of configuration sprawl and makes things much more consistent across different environments. By wrapping up common infrastructure patterns into reusable blocks, modules mean you don’t have to write the same setup over and over again for different projects and environments. This stops one huge configuration file from getting messy and hard to handle as your infrastructure grows. When you need to update something, you only have to change it in that one central module. That really cuts down on the work and the chance of errors that come with making changes everywhere.

What’s more, modules make sure your configurations are standardized, guaranteeing everything looks the same across all deployments, no matter which team or project is doing the work. They help keep things separate, so changes to one part of your infrastructure don’t accidentally mess up others. Modules can also bake in your organization’s best practices, security rules, and compliance standards right into the code. This means things automatically follow your guidelines. And because you can version modules, it helps stop “configuration drift” by making sure deployments always use a known, tested version of your infrastructure.

Now, everyone knows modularity is key for following the “Don’t Repeat Yourself” (DRY) rule and keeping things consistent. But here’s a crucial point: if you use it wrong, you can actually create new technical debt and make things more complicated. We often see “module sprawl” (that’s when you have too many modules doing pretty much the same thing) and “overuse of wrapper modules,” where you just wrap a simple resource in its own module without really adding much value. These habits can mean more work to maintain things, inconsistent deployments, and really tangled dependency chains. So, it’s not enough to just have modules; they need to be well-designed and managed properly. Without clear rules about when to create a module and strong management practices, the very thing meant to simplify can actually make your infrastructure less scalable and harder to manage over time. This just goes to show that how your organization works and its design principles are just as important as the technical stuff itself.

Here’s another cool thing about modular design: it helps you build a strong self-service system that naturally encourages compliance. By wrapping up complex setups and giving you a simpler way to interact with them, modules make it easier for other teams to get infrastructure, even if they’re not Terraform experts. HashiCorp even has “no-code ready modules” for this. What’s really important here is that by putting best practices, security policies, and compliance standards right into these modules, companies can make sure any infrastructure set up using them automatically follows all the rules. This creates a self-service setup where developers can get their own infrastructure quickly and efficiently, without needing to be security or compliance gurus. The design acts like a built-in safety net, cutting down on misconfigurations and making sure your infrastructure grows within set limits. That’s a huge win for both speed and good governance in big companies.

Building Robust Modules: Structure, Inputs, and Outputs

To build strong, easy-to-maintain Terraform modules, you’ll want to stick to a standard structure and really think about how their interfaces work. A typical new module usually has LICENSE, README.md, main.tf, variables.tf, and outputs.tf files. While you don’t have to use this exact structure, it’s highly recommended. It makes your modules easier to reuse and maintain, and helps tools like the Terraform Registry understand and show your module’s info properly.

Here’s what each file in that standard module structure is for:

| File/Directory | Purpose |
|---|---|
| module-name/ | The root directory for the module, containing all its configuration files. |
| main.tf | This file holds the core resources and logic that the module provisions or manages. |
| variables.tf | This file declares the input variables, making the module configurable and adaptable to different scenarios. |
| outputs.tf | This file defines the output values that the module exposes, allowing other configurations to consume information about the provisioned infrastructure. |
| README.md | Provides comprehensive documentation on how to use the module, its purpose, inputs, and outputs. |
| LICENSE | Specifies the terms under which the module is distributed, crucial for shared modules. |
| providers.tf (Optional) | Specifies any required providers for the module, ensuring necessary plugins are available. |
| examples/ (Optional) | Contains practical usage examples of the module, demonstrating how it can be implemented. |
| tests/ (Optional) | Includes automated tests for the module, ensuring its functionality and reliability. |

Let’s look at a simple example of a Terraform module for creating an AWS S3 bucket. This module will create a bucket with versioning enabled and a specified access control list (ACL).

First, you’d create a directory for your module, say modules/s3-bucket/. Inside this directory, you’d have your main.tf, variables.tf, and outputs.tf files.

The module’s main.tf holds the bucket and its related resources:

```hcl
# modules/s3-bucket/main.tf
# Core logic for creating an AWS S3 bucket with versioning.
resource "aws_s3_bucket" "this" {
  bucket = var.bucket_name
  # Note: in AWS provider v4 and later the inline acl argument is deprecated
  # in favor of the separate aws_s3_bucket_acl resource.
  acl  = var.acl
  tags = var.tags # Allows for adding custom tags
}

resource "aws_s3_bucket_versioning" "this" {
  bucket = aws_s3_bucket.this.id

  versioning_configuration {
    status = var.versioning_enabled ? "Enabled" : "Suspended"
  }
}

# Optional: S3 bucket server-side encryption configuration
resource "aws_s3_bucket_server_side_encryption_configuration" "default" {
  bucket = aws_s3_bucket.this.id

  rule {
    apply_server_side_encryption_by_default {
      sse_algorithm = "AES256"
    }
  }
}
```

Its variables.tf declares the inputs:

```hcl
# modules/s3-bucket/variables.tf
# Input variables for the S3 bucket module.
variable "bucket_name" {
  description = "The name of the S3 bucket."
  type        = string
}

variable "acl" {
  description = "The canned ACL to apply to the S3 bucket."
  type        = string
  default     = "private"
}

variable "versioning_enabled" {
  description = "Enable or disable versioning for the S3 bucket."
  type        = bool
  default     = true
}

variable "tags" {
  description = "A map of tags to add to the S3 bucket."
  type        = map(string)
  default     = {}
}
```

And its outputs.tf exposes values to the caller:

```hcl
# modules/s3-bucket/outputs.tf
# Outputs for the S3 bucket module.
output "bucket_id" {
  description = "The ID of the S3 bucket."
  value       = aws_s3_bucket.this.id
}

output "bucket_arn" {
  description = "The ARN of the S3 bucket."
  value       = aws_s3_bucket.this.arn
}

output "bucket_domain_name" {
  description = "The domain name of the bucket."
  value       = aws_s3_bucket.this.bucket_domain_name
}
```

In the root module, you configure the provider:

```hcl
# Configure the AWS Provider
provider "aws" {
  region = "us-west-2"

  # Optional: Use shared credentials and profile for local testing
  shared_credentials_files = [pathexpand("~/.aws/credentials")]
  profile                  = "TF_TEST_PROFILE"
}
```

Then you call the module and expose an output:

```hcl
# Root module configuration

# Define environment-specific variables
locals {
  environment = "dev" # Can be "dev", "staging", or "prod"
  common_tags = {
    Environment = local.environment
    Project     = "MyWebApp"
    ManagedBy   = "Terraform"
  }
}

# Call the S3 bucket module
module "my_website_bucket" {
  source = "./modules/s3-bucket"

  bucket_name        = "my-unique-bucket-${local.environment}" # Include environment in bucket name
  acl                = "private"
  versioning_enabled = local.environment == "prod" # Enable versioning only in production
  tags               = merge(local.common_tags, { Name = "MyWebApp-Bucket-${local.environment}" })
}

# Outputs from the root module
output "website_bucket_url" {
  description = "The URL of the S3 bucket."
  value       = "https://${module.my_website_bucket.bucket_domain_name}"
}

# Define variables for the root module
# No specific variables needed here as we are using locals
```

In this example, module "my_website_bucket" references the s3-bucket module you created. It passes values for bucket_name, acl, versioning_enabled, and tags. The output "website_bucket_url" then uses an output from the module (module.my_website_bucket.bucket_domain_name) to construct a URL, showing how modules can pass information between different parts of your infrastructure setup.

The main.tf file usually holds the main resource definitions and logic. In variables.tf, you declare your input variables, which makes the module customizable. If a variable doesn’t have a default value, it becomes a required input for anyone using your module. On the flip side, outputs.tf defines the values that the module makes available to whatever calls it. A well-thought-out, stable interface with clear variable names, specific type rules, good descriptions, and useful outputs makes a module simple to understand, use, and fit into bigger setups. A poorly defined interface can lead to mistakes and a tough learning curve for people using your module. This really highlights that designing modules isn’t just about putting resources together; it’s about creating a clear agreement for how that piece of infrastructure will be used. Thinking “API-first” for your modules is vital for better teamwork, fewer mistakes, and making sure modules truly speed up development instead of causing headaches and technical debt. The README.md file is also a big part of this “interface,” giving you important, easy-to-read documentation.

Input variables are super important because they let you customize modules without changing their actual code, which helps you reuse them across different setups. They take values passed in from the module that’s calling them. For input variables, it’s best to use names that clearly describe what they’re for, set specific types for clarity, and provide smart default values for settings that don’t change between environments. You should avoid hardcoding values inside the module; instead, use variables to keep things flexible. Output values, though, send results back to the module that called them, which can then use those values to fill in other arguments. They’re essential for showing information about resources Terraform manages and are key for modules to talk to each other, and for displaying important info after you run terraform apply. For security, any sensitive outputs, like passwords, should be clearly marked as sensitive = true so they’re redacted in CLI output and logs. Keep in mind that those values are still written to the state file, which is one more reason to protect your state.
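Here’s a hedged sketch of what a well-described interface can look like. The environment variable, its allowed values, and the generated password are all invented for this example; the random_password resource comes from the hashicorp/random provider.

```hcl
variable "environment" {
  description = "Deployment environment for the resources in this module."
  type        = string

  # Reject unexpected values at plan time instead of failing later.
  validation {
    condition     = contains(["dev", "staging", "prod"], var.environment)
    error_message = "The environment must be one of: dev, staging, prod."
  }
}

variable "tags" {
  description = "Extra tags to apply to every resource in this module."
  type        = map(string)
  default     = {} # sensible default, so callers only set it when needed
}

# Hypothetical secret generated inside the module.
resource "random_password" "db" {
  length  = 24
  special = true
}

output "db_password" {
  description = "Generated database password."
  value       = random_password.db.result
  sensitive   = true # redacted in CLI output, but still stored in state
}
```

Because environment has no default, callers must set it. The tags variable has one, so it stays optional.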

You can use Terraform modules in two main ways:

- Local Modules: You load these from a path right on your local computer. They’re great for organizing and wrapping up code, even for projects just one person is working on.

- Remote Modules: These come from outside sources like the Terraform Registry, version control systems (think Git), HTTP links, or private registries. Remote modules are perfect for reusing setups across lots of projects or teams, because sharing modules is a big part of reusing code and working together.
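The two kinds of source look like this in a module block. The registry module shown is the public terraform-aws-modules VPC module; the Git URL and the bucket and VPC names are placeholders, and the inputs for the Git-hosted module are left out because they depend on that module.

```hcl
# Local module: loaded from a path relative to the calling configuration.
module "logs_bucket" {
  source      = "./modules/s3-bucket"
  bucket_name = "example-logs-bucket" # hypothetical name
}

# Remote module from the public Terraform Registry.
module "vpc" {
  source  = "terraform-aws-modules/vpc/aws"
  version = "~> 5.0" # assumed constraint for this sketch

  name = "example-vpc"
  cidr = "10.0.0.0/16"
}

# Remote module from a Git repository (placeholder URL).
# "//network" selects a subdirectory and "ref" pins a tag or commit.
module "network" {
  source = "git::https://example.com/infra/terraform-modules.git//network?ref=v1.2.0"
}
```

One thing to note: the version argument only applies to modules that come from a registry. For Git sources, you pin the version with the ref in the URL.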

Module composition is about putting together several “building-block” modules to create a bigger, more complex system. We highly recommend keeping your module tree pretty flat, with just one level of child modules, instead of making deeply nested structures. You should define relationships between these modules using expressions, just like you’d link resources in a flat setup. This design idea, especially something called dependency inversion, directly fights the complexity that comes with deeply nested modules. It leads to infrastructure that’s more flexible and easier to maintain. Dependency inversion means a module gets its dependencies (like vpc_id, subnet_ids) as inputs from the main, or root, module, instead of trying to manage its own dependent resources internally. This makes things more flexible and lets you connect the same modules in different ways to get different results. It’s super handy when some objects might already exist in certain environments or when you’re building multi-cloud setups. This moves the job of arranging dependencies from inside the module to the configuration that’s calling it. This makes individual modules more self-contained and reusable, since they’re not tightly tied to how their dependencies are set up. The result is infrastructure code that’s more flexible and adaptable, easier to change over time, and reduces the “ripple effect” of changes, simplifying refactoring. That’s crucial for long-term scalability and keeping things running smoothly in ever-changing cloud environments.
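A rough sketch of dependency inversion follows. Both modules and their inputs and outputs are hypothetical. The app module never creates or looks up a network itself; it just takes vpc_id and subnet_ids as inputs, and the root module decides where those come from.

```hcl
# Option 1: the root module wires a network module into the app module.
module "network" {
  source     = "./modules/network" # hypothetical module
  cidr_block = "10.0.0.0/16"
}

module "app" {
  source = "./modules/app" # hypothetical module

  vpc_id     = module.network.vpc_id
  subnet_ids = module.network.private_subnet_ids
}

# Option 2: the network already exists, so the root module looks it up
# with data sources and passes the same inputs to the same app module.
data "aws_vpc" "shared" {
  tags = {
    Name = "shared-vpc" # hypothetical tag value
  }
}

data "aws_subnets" "shared" {
  filter {
    name   = "vpc-id"
    values = [data.aws_vpc.shared.id]
  }
}

module "app_in_shared_vpc" {
  source = "./modules/app"

  vpc_id     = data.aws_vpc.shared.id
  subnet_ids = data.aws_subnets.shared.ids
}
```

The app module is identical in both cases, and the module tree stays flat because the wiring lives in the root module.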

It’s often tough to decide when to turn your code into a Terraform module versus just using a basic resource directly. Good abstraction is important, but wrapping things unnecessarily can just make them more complicated.

You should create modules when:

- You find yourself copying the same kind of resource setup over and over. This helps you stick to the DRY principle.

- A resource absolutely needs specific configuration or metadata applied to it.

- A resource has strict or complex naming rules that must be followed.

- A resource relies on information, metadata, or context that the user can’t know.

- The module will make your overall configuration or its interface simpler.

- Resources share a common business purpose or requirement.

- A bunch of resources have a similar lifecycle.

- Your goal is to consistently and repeatedly set up cloud resources.

On the other hand, be careful or avoid modules when:

- You’re overusing “wrapper modules” where you just put a resource in a module without adding much real value. This can lead to huge, complex dependency chains and force new releases for tiny changes.

- A resource is always going to be used by a higher-level module; in these cases, you probably shouldn’t make it a standalone or wrapper Terraform module.

- Too many modules could make your overall Terraform setup harder to understand and keep up with.

Best Practices for Maintaining Healthy and Scalable Modular Terraform

Keeping your modular Terraform environment healthy and scalable means you’ve got to be really careful about following best practices for versioning, state management, testing, and governance.

Versioning Strategies

Good versioning is super important for keeping things stable and predictable. Terraform modules usually follow semantic versioning (MAJOR.MINOR.PATCH). The major version tells you about changes that aren’t backward-compatible, the minor version means new features that are backward-compatible, and the patch version is for bug fixes that also are backward-compatible. This system helps teams understand how big an update is and plan their upgrades carefully.

It’s really important to use version constraints (like ~> 1.2.0) in your module blocks. This helps make sure things are compatible and stops accidental problems from upgrades you haven’t tested. These constraints help Terraform pick the right versions automatically when you run terraform init. Also, regularly checking and updating Terraform and provider versions is vital to keep up with vendor improvements and security fixes. Automated tools like Dependabot and Renovate can really help with this. Plus, clear communication about planned upgrades, what might be affected, and testing schedules helps everyone on the team work together. For the most consistency and to avoid unexpected changes or configuration drift, sticking to strict version pinning (like version = "1.2.3") is a widely suggested practice.
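Here’s how those two styles look side by side. The registry address below is a made-up private registry path, and the Terraform and provider constraints are assumptions for the sketch.

```hcl
terraform {
  # Constrain the Terraform CLI version itself.
  required_version = ">= 1.6.0, < 2.0.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # any 5.x release
    }
  }
}

# Pessimistic constraint: accepts 1.2.0 and later patch releases,
# but not 1.3.0 or above.
module "assets_bucket" {
  source  = "app.terraform.io/example-org/s3-bucket/aws" # hypothetical
  version = "~> 1.2.0"

  bucket_name = "example-assets-bucket"
}

# Strict pin: only this exact release is ever selected.
module "audit_bucket" {
  source  = "app.terraform.io/example-org/s3-bucket/aws" # hypothetical
  version = "1.2.3"

  bucket_name = "example-audit-bucket"
}
```

The pessimistic constraint picks up patch fixes on the next init with upgrade, while the strict pin only moves when someone edits the version by hand. Which trade-off fits depends on how much you trust the module’s release process.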

State Management in Modular Setups

You can’t talk enough about how important remote state is for secure Terraform operations where teams work together. You should always use remote state because it lets multiple team members share access, locks the state to stop simultaneous changes, and makes things more secure with encryption.

Having ways to isolate state files is key to keeping risks low. We suggest using multiple state files for different parts of your infrastructure, logically separating resources. This really cuts down the “blast radius” of any mistake, meaning an error in one setup won’t mess up all your resources. Similarly, keeping separate state files for different environments (like development, staging, and production) gives you strong separation and stops accidental impacts across environments. When you’re using multiple state files, you’ll often need to refer to outputs from setups stored in different state files. You can do this using the terraform_remote_state data source. And remember, you should never store state files in source control, because they contain plain text data that might include sensitive information.
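As a sketch, here’s how one configuration might read an output from another configuration’s state. It assumes the network state lives in a hypothetical S3 bucket and that the network configuration exposes a root-level output named private_subnet_id.

```hcl
# Read the outputs of the separately managed network configuration.
data "terraform_remote_state" "network" {
  backend = "s3"

  config = {
    bucket = "example-terraform-state"   # hypothetical bucket
    key    = "network/terraform.tfstate" # state file of the network setup
    region = "us-west-2"
  }
}

variable "ami_id" {
  description = "AMI to launch the example instance from."
  type        = string
}

resource "aws_instance" "app" {
  ami           = var.ami_id
  instance_type = "t3.micro"

  # Only root-module outputs of the other configuration are available here.
  subnet_id = data.terraform_remote_state.network.outputs.private_subnet_id
}
```

Anyone who can read this data source can read the whole remote state, so access to the state bucket still needs to be locked down.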

Comprehensive Testing Methodologies

Thorough testing is absolutely essential for making sure your IaC is reliable and high-quality in scalable environments. Different testing methods come with different costs, runtimes, and levels of depth:

| Testing Method | Description | Tools/Approach | Cost/Runtime | Purpose |
|---|---|---|---|---|
| Static Analysis | Examines syntax, structure, and potential misconfigurations without deploying any actual resources. | terraform validate, tflint, tfsec, Checkov | Low / Fast | Catches basic errors, security misconfigurations, and style issues early in the development cycle. |
| Unit Testing | Focuses on testing individual modules in isolation, often using mock providers to simulate infrastructure. | Terraform Test (built-in), mock providers | Low / Fast (no real infrastructure) | Verifies module logic and confirms expected outputs without provisioning actual cloud resources. |
| Module Integration Testing | Involves deploying individual modules into a dedicated test environment to verify resource creation and their intended functionality. | Terratest, Kitchen-Terraform, InSpec, Google’s blueprint testing framework | Medium / Moderate | Ensures that modules function correctly as designed when deployed in a realistic context. |
| End-to-End Testing | Extends integration testing by deploying the entire architecture (comprising multiple modules) into a fresh test environment that closely mirrors the production environment. | Terratest, custom scripts | High / Slow | Confirms that all modules work together cohesively and that the complete system behaves as expected in a near-production setting. |

Tools like terraform validate do static analysis to check your syntax and make sure your configuration is valid. Terratest, which is a Go-based framework, is widely used for integration and end-to-end testing. It’s flexible, works well with CI/CD pipelines, and saves you money because it only sets up infrastructure while the tests are running. There’s also a newer, built-in option called Terraform Test, which is an HCL-based testing feature available in Terraform v1.6 and later. It lets your tests live right next to your main code, and since v1.7 it supports mock providers for testing that won’t cost you anything, since you don’t need to set up actual infrastructure.
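To give a feel for the built-in framework, here’s a minimal sketch of a test file for the S3 bucket module from earlier. The file name and bucket name are assumptions, and it needs a Terraform version with mock provider support.

```hcl
# modules/s3-bucket/tests/versioning.tftest.hcl (hypothetical file name)

# Replace the real AWS provider with a mock, so no credentials are
# needed and no real resources are created.
mock_provider "aws" {}

# Default inputs shared by every run block in this file.
variables {
  bucket_name = "example-test-bucket"
}

run "versioning_is_enabled_by_default" {
  command = plan

  assert {
    condition     = aws_s3_bucket_versioning.this.versioning_configuration[0].status == "Enabled"
    error_message = "Versioning should be Enabled when versioning_enabled is left at its default."
  }
}

run "versioning_can_be_suspended" {
  command = plan

  # Override a single input for this run only.
  variables {
    versioning_enabled = false
  }

  assert {
    condition     = aws_s3_bucket_versioning.this.versioning_configuration[0].status == "Suspended"
    error_message = "Versioning should be Suspended when versioning_enabled is false."
  }
}
```

You’d run this with terraform test from the module directory after terraform init. Both runs only plan, so they check the module’s logic rather than real AWS behavior.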

There are a few practical things to keep in mind for good testing. Tests can create, change, and destroy real infrastructure, so they can take a lot of time and cost money. To help with this, it’s smart to break your architecture into smaller modules and test each one separately. This makes tests faster, cheaper, and more reliable. Ideally, each test should be independent, so you’re not reusing state across tests. Taking a “fail fast” approach by running cheaper methods (like static analysis and unit tests) first helps you catch problems early. Always use a separate, isolated environment for testing to stop accidental deletions or changes to your development or production resources. And super important: make sure all resources are completely cleaned up after testing so you don’t get hit with unnecessary cloud bills.

Focusing on static analysis, unit testing (especially with mock providers), and policy enforcement shows a strong move towards “shifting left” quality and security checks in IaC pipelines. This means finding and fixing problems (everything from syntax errors and wrong setups to security holes and policy breaches) earlier in the development process, often even before you run any terraform plan or apply command. This proactive way of working really cuts down the cost of fixing bugs, speeds up development, and naturally makes your infrastructure more secure and compliant by catching issues when they’re easiest and cheapest to sort out. It’s a big change from just reacting to problems to actively making sure things are good from the start.

Governance and Security

Strong governance and security are super important for big IaC deployments. Putting clear approval steps in your workflow, especially for module or resource upgrades, is a critical way to lower the risk of accidentally breaking things. This gives you an essential extra layer of review for big changes. You should also set the prevent_destroy lifecycle argument on critical resources, so Terraform rejects any plan that would delete them.
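Here is a minimal sketch of prevent_destroy in use. The resource type and bucket name are just examples chosen for illustration.

```hcl
# A resource that should never be deleted by accident,
# for example a bucket holding important data.
resource "aws_s3_bucket" "critical" {
  bucket = "example-critical-data-bucket" # hypothetical name

  lifecycle {
    # Terraform returns an error for any plan that would destroy
    # this resource while this argument is set.
    prevent_destroy = true
  }
}
```

Keep in mind that this guard lives in the configuration itself. If someone removes the whole resource block, the lifecycle setting goes with it and Terraform will plan the deletion, which is one more reason to keep a review step in the workflow.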

Automated governance, especially with tools like Open Policy Agent (OPA), is a strong way to fight module sprawl, misconfigurations, and security weaknesses in big IaC setups. OPA lets you define policies as code and check your infrastructure configurations against them. For instance, OPA policies can make sure you only use approved module sources and registries, require strict module version pinning, demand specific modules for critical resource types, and flag deprecated or insecure modules. This automated enforcement gives you built-in guardrails, so developers stick to your dependency management practices and company standards without relying on manual review alone. It tackles module sprawl and the risks that come with it by keeping things consistent and secure right from the start.
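OPA policies themselves are written in Rego and aren’t shown here. What can be sketched in Terraform is the kind of module call such policies are usually meant to allow: one that comes from an approved registry and pins an exact version. The registry host, organization, module name, and input below are hypothetical.

```hcl
# A module call that an "approved source + pinned version" policy
# would typically accept.
module "network" {
  # Hypothetical private registry address (host/namespace/name/provider).
  source = "app.terraform.io/example-org/network/aws"

  # Exact version pin, so every environment gets the same module code.
  version = "2.3.1"

  # Assumed input of the hypothetical module.
  cidr_block = "10.0.0.0/16"
}
```

A call that points at an unreviewed Git URL, or leaves out version entirely, is what the policy would be written to reject.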

Good security practices also mean avoiding hard-coded secrets: don’t put credentials directly in your code. Instead, pass them in through variables marked as sensitive, or pull them from a dedicated secrets management tool. Regularly scanning your IaC setups for misconfigurations using static application security testing (SAST) or software composition analysis (SCA) tools is super important for finding weaknesses that could leave your infrastructure open to attack.
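As a small illustration of the sensitive-variable approach, the sketch below keeps a database password out of the code. The variable name and the database settings are assumptions chosen for the example.

```hcl
# The password is never written in the code. It can be supplied at
# run time, for example through the TF_VAR_db_password environment variable.
variable "db_password" {
  description = "Master password for the example database."
  type        = string
  sensitive   = true # redacts the value in plan and apply output
}

resource "aws_db_instance" "example" {
  identifier          = "example-db" # hypothetical name
  engine              = "postgres"
  instance_class      = "db.t3.micro"
  allocated_storage   = 20
  username            = "app_admin"
  password            = var.db_password
  skip_final_snapshot = true
}
```

Marking a variable as sensitive only hides it in CLI output. The value is still written to the state file, so the state itself needs to be stored and accessed securely.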

Documentation

Thorough documentation is vital for keeping modular Terraform easy to maintain and use. Every module should have a clear README.md file that explains its purpose, inputs, outputs, dependencies, and how to use it. Keeping changelogs is also important to track big changes, giving module users clear info on updates. Plus, clearly documenting version requirements and constraints in your project docs makes sure team members know about compatibility needs.
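Part of this documentation can live in the code itself. The sketch below shows one way a module might declare its version requirements and describe an input; the specific version ranges and the environment variable are assumptions for illustration.

```hcl
# versions.tf: states compatibility needs where Terraform can enforce them.
terraform {
  required_version = ">= 1.6.0" # assumed minimum, adjust to your module

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = ">= 5.0, < 6.0" # assumed supported range
    }
  }
}

# variables.tf: a description on every input keeps the README easy to maintain.
variable "environment" {
  description = "Deployment environment name, for example dev, staging, or prod."
  type        = string

  validation {
    condition     = contains(["dev", "staging", "prod"], var.environment)
    error_message = "The environment must be one of: dev, staging, prod."
  }
}
```

Tools such as terraform-docs can generate the inputs and outputs tables of a README.md from these descriptions, which helps keep the docs from drifting away from the code.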

Conclusion

Modular Terraform isn’t just a nice-to-have technical feature; it’s a core strategy for building strong, scalable, and easy-to-maintain Infrastructure as Code. By adopting modular design, companies can change how they manage infrastructure from a manual, mistake-prone process into something automated, consistent, and super efficient.

Your journey starts with really understanding Infrastructure as Code principles, using Terraform’s declarative power and its key parts. But the real scalability and ease of management come from well-designed modules that hide complexity, encourage reuse, and make sure things are consistent across different environments. Sticking to a structured module design, carefully defining inputs and outputs, and smartly using module composition are all vital steps here.

What’s more, keeping a healthy modular Terraform ecosystem needs ongoing work. This means putting in place strict versioning strategies, managing state files remotely and securely, using thorough testing methods (from static analysis to full end-to-end checks), and setting up strong governance frameworks. Together, these practices help reduce common problems like configuration sprawl, dependency conflicts, and security weaknesses.

Ultimately, by sticking to modular Terraform best practices, companies can gain incredible speed, cut down on operational risks, save money, and build a resilient cloud infrastructure that can support fast innovation and growth. It’s an investment that really pays off in terms of reliability, efficiency, and the confidence to scale operations in a constantly changing digital world.
