
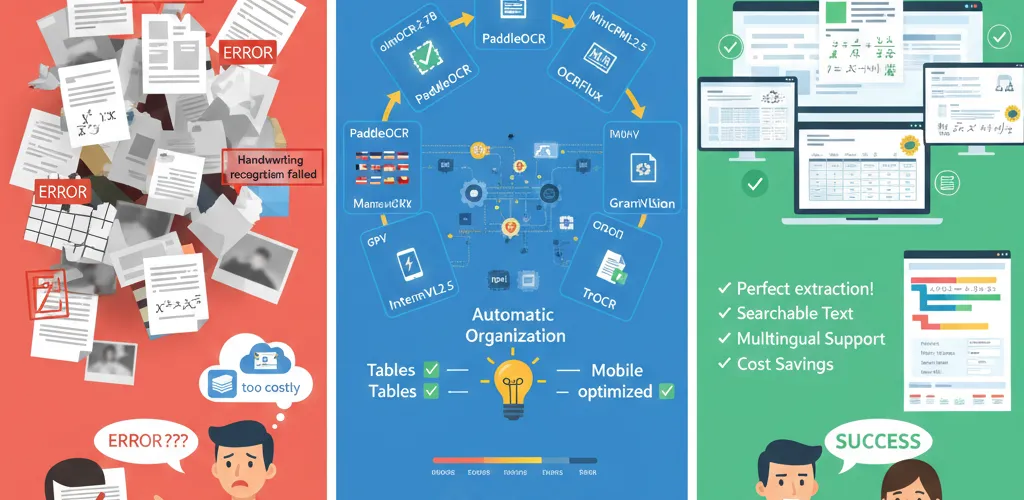

Turn your documents into faithful digital copies with these powerful open source OCR models. No more messy text extraction: get clean, accurate markdown from PDFs, images, and scanned documents.

The OCR Revolution

Beyond Basic Text Extraction

Complete document understanding

These modern OCR models do way more than just read text. They understand document structure, tables, diagrams, math equations, and multiple languages, turning everything into well-formatted markdown.

Local Processing Benefits

Privacy and control

Run these models on your own machine instead of sending sensitive documents to cloud services. You keep complete control over your data while getting accuracy that can rival commercial enterprise solutions.

olmOCR-2-7B-1025

High-Performance Document OCR

Allen Institute’s flagship model

This model is fine-tuned from Qwen2.5-VL-7B-Instruct with GRPO reinforcement learning and scores 82.4 on the olmOCR-Bench evaluation.

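```python
import torch
from PIL import Image
from transformers import AutoProcessor, Qwen2_5_VLForConditionalGeneration

# Load the model: olmOCR-2 is a Qwen2.5-VL fine-tune, so it uses the Qwen2.5-VL classes
model_id = "allenai/olmOCR-2-7B-1025"
model = Qwen2_5_VLForConditionalGeneration.from_pretrained(
    model_id, torch_dtype=torch.bfloat16, device_map="auto"
).eval()
processor = AutoProcessor.from_pretrained("Qwen/Qwen2.5-VL-7B-Instruct")

# Process document: pass a page image and the instruction in one user turn.
# The olmOCR toolkit renders PDF pages to images and uses its own longer prompt;
# this plain instruction is a simplification.
image = Image.open("path/to/page.png").convert("RGB")
messages = [{"role": "user", "content": [
    {"type": "image"},
    {"type": "text", "text": "Convert this document to markdown"},
]}]
text = processor.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
inputs = processor(text=[text], images=[image], return_tensors="pt").to(model.device)

outputs = model.generate(**inputs, max_new_tokens=4096)
# Decode only the newly generated tokens, not the echoed prompt
new_tokens = outputs[:, inputs["input_ids"].shape[1]:]
result = processor.batch_decode(new_tokens, skip_special_tokens=True)[0]
```

Key Features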

- Mathematical equations: Handles complex math expressions

- Table recognition: Keeps table structure and formatting intact

- Layout understanding: Manages multi-column documents and complex layouts

- Large-scale processing: Built for processing millions of documents

- Automated retries: The olmOCR pipeline toolkit adds error handling, retries, and automatic rotation correction
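Those retries live in the olmOCR pipeline toolkit, not in the model weights. If you call the model directly, as in the snippet above, a generic retry wrapper can cover transient failures such as out-of-memory errors or timeouts. The sketch below is a hypothetical helper (`with_retries` is not part of olmOCR), and it does not attempt rotation correction.

```python
import time


def with_retries(fn, *args, attempts=3, base_delay=0.5, **kwargs):
    """Call fn(*args, **kwargs), retrying on any exception.

    Hypothetical helper, not part of olmOCR: waits base_delay, then
    2 * base_delay, 4 * base_delay, ... between attempts, and re-raises
    the last error once all attempts are used up.
    """
    for attempt in range(1, attempts + 1):
        try:
            return fn(*args, **kwargs)
        except Exception:
            if attempt == attempts:
                raise  # out of attempts: surface the original error
            time.sleep(base_delay * 2 ** (attempt - 1))
```

For example, `with_retries(run_ocr, image, attempts=3)`, where `run_ocr` is your own (hypothetical) function wrapping the `generate` call.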

PaddleOCR VL

Efficient Multilingual OCR

Ultra-compact vision-language model

Integrates a NaViT-style dynamic-resolution visual encoder with the ERNIE-4.5-0.3B language model, supporting 109 languages with minimal resource usage.

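```python
# Assumes PaddlePaddle and PaddleOCR 3.x (with the doc-parser extra) are installed
from paddleocr import PaddleOCRVL

# Initialize the PaddleOCR-VL document parsing pipeline
pipeline = PaddleOCRVL()

# Process document: predict() returns one result per page
output = pipeline.predict("path/to/document.pdf")
for res in output:
    res.print()
    res.save_to_markdown(save_path="output")
```

Key Features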

- 109 languages: Including Chinese, English, Japanese, Arabic, Hindi, and Thai

- Complex elements: Tables, formulas, charts recognition

- High accuracy: State-of-the-art performance on OmniDocBench

- Fast inference: Optimized for real-world deployment

- Compact size: The core PaddleOCR-VL-0.9B model keeps resource use low

OCRFlux-3B

Multimodal Document Conversion

Preview release from ChatDOC

Fine-tuned from Qwen2.5-VL-3B-Instruct for clean markdown output from PDFs and images.

```python
import torch
from transformers import Qwen2_5_VLForConditionalGeneration, AutoProcessor
from qwen_vl_utils import process_vision_info  # pip install qwen-vl-utils

# Load model
model = Qwen2_5_VLForConditionalGeneration.from_pretrained(
    "ChatDOC/OCRFlux-3B", torch_dtype=torch.bfloat16, device_map="auto"
)
processor = AutoProcessor.from_pretrained("ChatDOC/OCRFlux-3B")

# Process image
messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": "path/to/image.jpg"},
            {"type": "text", "text": "Convert to markdown"},
        ],
    }
]

text = processor.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
image_inputs, video_inputs = process_vision_info(messages)
inputs = processor(text=[text], images=image_inputs, videos=video_inputs, return_tensors="pt").to(model.device)

generated_ids = model.generate(**inputs, max_new_tokens=4096)
# Strip the prompt tokens so only the model's answer is decoded
trimmed = generated_ids[:, inputs["input_ids"].shape[1]:]
generated_text = processor.batch_decode(trimmed, skip_special_tokens=True)[0]
```

Key Features

- Clean markdown: Structured output with proper formatting

- Cross-page tables: Merges table data across multiple pages

- Consumer hardware: Runs on an RTX 3090 and similar GPUs

- Scalable deployment: vLLM inference support

- State-of-the-art accuracy: Superior parsing quality
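OCRFlux performs cross-page merging with the model itself. To illustrate what the task involves, here is a much simpler text-only heuristic (an assumption for illustration, not OCRFlux's method): when one page's markdown ends with a table and the next page starts with one, drop the repeated header and separator rows and join the rows into a single table. `merge_pages` is a hypothetical name.

```python
import re

# Markdown table separator row such as |---|---| or | :--- | ---: |
SEPARATOR = re.compile(r"^\|?\s*:?-{3,}:?\s*(\|\s*:?-{3,}:?\s*)*\|?$")


def _is_row(line):
    return line.strip().startswith("|")


def merge_pages(page_a, page_b):
    """Join two pages of markdown, stitching a table that continues across the break."""
    a = page_a.rstrip("\n").split("\n")
    b = page_b.lstrip("\n").split("\n")
    if not (_is_row(a[-1]) and _is_row(b[0])):
        # No table spans the page break: keep the pages as separate blocks
        return page_a.rstrip("\n") + "\n\n" + page_b.lstrip("\n")
    # Walk back to the header row of the table that ends page A
    start = len(a) - 1
    while start > 0 and _is_row(a[start - 1]):
        start -= 1
    header = a[start].strip()
    # If page B repeats that header plus a separator row, drop both
    if len(b) >= 2 and b[0].strip() == header and SEPARATOR.match(b[1].strip()):
        b = b[2:]
    return "\n".join(a + b)
```

Real documents break this heuristic easily (headers reworded between pages, rows split mid-cell), which is why a model-based merge is attractive.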

MiniCPM-V 4.5

Mobile-Optimized OCR

Latest in the MiniCPM-V series

Built on Qwen3-8B and SigLIP2-400M, it delivers exceptional performance for text recognition in images, documents, and videos.

```python
import torch
from PIL import Image
from transformers import AutoModel, AutoTokenizer

# Load model (the Hugging Face repo ID uses an underscore: MiniCPM-V-4_5)
model = AutoModel.from_pretrained(
    'openbmb/MiniCPM-V-4_5', trust_remote_code=True, torch_dtype=torch.bfloat16
)
tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True)
model = model.eval().cuda()

# Process image
image = Image.open('path/to/image.jpg').convert('RGB')
question = 'Convert this document to text'

msgs = [{'role': 'user', 'content': [image, question]}]
result = model.chat(msgs=msgs, tokenizer=tokenizer, sampling=True, temperature=0.7)
print(result)
```

Key Features

- Mobile deployment: Optimized for edge devices

- Multi-image processing: Handles multiple images simultaneously

- Video OCR: Text recognition in video content

- State-of-the-art benchmarks: Leading performance across evaluations

- Practical efficiency: Everyday application ready
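Video OCR usually starts by sampling a manageable number of frames, which are then passed to the model as images. Below is a minimal sketch of uniform sampling; the one-frame-per-second rate and the 64-frame cap are illustrative assumptions, `sample_frame_indices` is a hypothetical helper, and decoding the frames themselves is left to whatever video library you use.

```python
def sample_frame_indices(num_frames, native_fps, target_fps=1.0, max_frames=64):
    """Pick frame indices at roughly target_fps, thinned evenly to max_frames."""
    # Step between sampled frames, e.g. 30 for a 30 fps video sampled at 1 fps
    step = max(1, round(native_fps / target_fps))
    indices = list(range(0, num_frames, step))
    if len(indices) > max_frames:
        # Too many frames for one request: keep max_frames of them,
        # spread evenly across the whole clip
        gap = len(indices) / max_frames
        indices = [indices[int(i * gap + gap / 2)] for i in range(max_frames)]
    return indices
```

A 10-second clip at 30 fps yields 10 frames; a long recording is capped at 64 evenly spaced frames so the prompt stays within the model's context.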

InternVL2.5-4B

Compact Multimodal Understanding

Efficient vision-language model

Combines the InternViT-300M vision encoder with the Qwen2.5-3B-Instruct language model for comprehensive OCR and document understanding.

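```python
import torch
import torchvision.transforms as T
from torchvision.transforms.functional import InterpolationMode
from PIL import Image
from transformers import AutoTokenizer, AutoModel

# Load model
path = 'OpenGVLab/InternVL2_5-4B'
model = AutoModel.from_pretrained(
    path, torch_dtype=torch.bfloat16, low_cpu_mem_usage=True, trust_remote_code=True
).eval().cuda()
tokenizer = AutoTokenizer.from_pretrained(path, trust_remote_code=True, use_fast=False)

# chat() expects a normalized pixel tensor, not a PIL image. This resizes to a single
# 448x448 tile; the model card's load_image() helper adds dynamic multi-tile cropping.
transform = T.Compose([
    T.Resize((448, 448), interpolation=InterpolationMode.BICUBIC),
    T.ToTensor(),
    T.Normalize(mean=(0.485, 0.456, 0.406), std=(0.229, 0.224, 0.225)),
])
image = Image.open('path/to/image.jpg').convert('RGB')
pixel_values = transform(image).unsqueeze(0).to(torch.bfloat16).cuda()

# <image> marks where the image tokens go in the prompt
question = '<image>\nExtract all text from this image'
generation_config = dict(max_new_tokens=1024, do_sample=False)
response = model.chat(tokenizer, pixel_values, question, generation_config)
print(response)
```

Key Features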

- Dynamic resolution: Splits images into 448×448 pixel tiles

- Resource efficient: Suitable for constrained environments

- Text recognition: Strong OCR performance

- Reasoning tasks: Advanced multimodal understanding

- Compact architecture: 4 billion parameters total
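InternVL's dynamic resolution picks a grid of 448×448 tiles whose aspect ratio best matches the input image, up to a maximum tile count. The sketch below reproduces that grid choice in plain Python. It is modeled on the `dynamic_preprocess` helper in the InternVL model card, but the name `tile_grid` and the defaults here are assumptions, and it skips the actual cropping and the extra thumbnail tile the card's helper appends when more than one tile is used.

```python
def tile_grid(width, height, tile=448, min_num=1, max_num=12):
    """Choose a (cols, rows) grid of tile x tile crops for an image.

    Among all grids with min_num..max_num tiles, pick the one whose aspect
    ratio is closest to the image's; on a tie, prefer the larger grid only
    if the image has enough pixels to fill at least half of it.
    """
    aspect = width / height
    area = width * height
    grids = sorted(
        {(c, r) for c in range(1, max_num + 1) for r in range(1, max_num + 1)
         if min_num <= c * r <= max_num},
        key=lambda g: (g[0] * g[1], g),  # fewest tiles first
    )
    best, best_diff = (1, 1), float("inf")
    for cols, rows in grids:
        diff = abs(aspect - cols / rows)
        if diff < best_diff:
            best, best_diff = (cols, rows), diff
        elif diff == best_diff and area > 0.5 * tile * tile * cols * rows:
            best = (cols, rows)
    return best
```

With these defaults, a 3000×1500 scan gets a 4×2 grid (8 tiles), a 1000×500 image gets 2×1, and a 448×448 crop stays a single tile, so small images are not upscaled into many blurry tiles.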

Granite Vision 3.3 2b

Document Understanding Specialist

IBM’s vision-language model

Built on Granite 3.1-2b-instruct with SigLIP2 vision encoder for automated content extraction.

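```python
from transformers import AutoProcessor, AutoModelForVision2Seq

# Load model
model_path = "ibm-granite/granite-vision-3.3-2b"
processor = AutoProcessor.from_pretrained(model_path)
model = AutoModelForVision2Seq.from_pretrained(model_path, device_map="auto")

# Pair the page image with an instruction in one chat turn (example prompt)
conversation = [{"role": "user", "content": [
    {"type": "image", "url": "path/to/document.png"},
    {"type": "text", "text": "Extract all text from this document as markdown."},
]}]
inputs = processor.apply_chat_template(
    conversation, add_generation_prompt=True, tokenize=True,
    return_dict=True, return_tensors="pt",
).to(model.device)

generated_ids = model.generate(**inputs, max_new_tokens=500)
# Decode only the new tokens, not the echoed prompt
new_ids = generated_ids[:, inputs["input_ids"].shape[1]:]
generated_text = processor.batch_decode(new_ids, skip_special_tokens=True)[0]
```

Key Features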

- Table extraction: Automated table content extraction

- Chart recognition: Infographics and plot understanding

- Multi-page support: Handles multi-page documents

- Image segmentation: Advanced visual processing

- Enhanced safety: Improved safety behavior compared with earlier Granite Vision models

TrOCR Large (SROIE Fine-tuned)

Specialized Text Recognition

Transformer-based OCR

Encoder-decoder architecture combining BEiT image transformer with RoBERTa text transformer.

```python
from PIL import Image
from transformers import TrOCRProcessor, VisionEncoderDecoderModel

# Load model (trocr-large-printed is the checkpoint fine-tuned on SROIE)
processor = TrOCRProcessor.from_pretrained('microsoft/trocr-large-printed')
model = VisionEncoderDecoderModel.from_pretrained('microsoft/trocr-large-printed')

# Process a cropped image containing a single line of text
image = Image.open('path/to/line.jpg').convert('RGB')
pixel_values = processor(images=image, return_tensors="pt").pixel_values
generated_ids = model.generate(pixel_values)

generated_text = processor.batch_decode(generated_ids, skip_special_tokens=True)[0]
print(generated_text)
```

Key Features

- Single-line text: Expects an image of one printed text line rather than a full page

- High accuracy: State-of-the-art performance on benchmarks

- Transformer architecture: Modern deep learning approach

- Pre-trained models: Leverages large-scale training data

- Sequence processing: Handles text as sequential tokens
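Because TrOCR reads one text line at a time, a full page has to be split into line crops first. One classic way to do that is a horizontal projection profile: rows with no ink separate lines. The sketch below is a generic technique, not part of TrOCR, and it assumes the page is already binarized into rows of 0/1 pixels; `line_bands` is a hypothetical helper.

```python
def line_bands(binary, min_height=2):
    """Return (top, bottom) row ranges, bottom exclusive, that contain text lines.

    binary: a page as a list of pixel rows, each a list of 0/1 values where
    1 means ink (i.e. the image has already been binarized).
    Bands shorter than min_height rows are treated as noise and skipped.
    """
    bands, top = [], None
    for y, row in enumerate(binary):
        has_ink = any(row)
        if has_ink and top is None:
            top = y  # entering a text line
        elif not has_ink and top is not None:
            if y - top >= min_height:
                bands.append((top, y))
            top = None  # leaving a text line
    # A line that touches the bottom edge of the page
    if top is not None and len(binary) - top >= min_height:
        bands.append((top, len(binary)))
    return bands
```

Each `(top, bottom)` band can then be cropped from the original image with Pillow's `image.crop((0, top, image.width, bottom))` and passed to the TrOCR snippet. Skewed scans or touching lines defeat this simple profile, so deskew first or use a layout model for harder pages.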

Choosing the Right Model

Use Case Considerations

Match model to needs

- High accuracy: olmOCR-2-7B-1025, OCRFlux-3B

- Efficiency: PaddleOCR VL, InternVL2.5-4B

- Multilingual: PaddleOCR VL, MiniCPM-V 4.5

- Mobile/Edge: MiniCPM-V 4.5, InternVL2.5-4B

- Specialized: TrOCR (printed text), Granite Vision (documents)

Performance Optimization

Hardware considerations

- GPU memory: Match model size to available VRAM

- Batch processing: Use models supporting multiple images

- Quantization: Consider quantized versions for efficiency

- Local deployment: All models support local inference
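A rough rule for matching model size to VRAM: weight memory ≈ parameter count × bits per parameter ÷ 8 bytes. A 7B model in bf16 (16 bits) therefore needs about 14 GB for its weights alone, and about 3.5 GB at 4-bit quantization. Activations, image tokens, and the KV cache for long outputs come on top, so treat the result as a floor. The parameter counts below are the nominal sizes in the model names, and `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(num_params, bits_per_param=16):
    """Lower-bound memory (GB, 10**9 bytes) to hold the weights alone."""
    return num_params * bits_per_param / 8 / 1e9


# Nominal sizes taken from the model names in this article
models = {"olmOCR-2-7B-1025": 7e9, "InternVL2.5-4B": 4e9, "OCRFlux-3B": 3e9}
for name, params in models.items():
    for bits in (16, 8, 4):
        print(f"{name}: {bits}-bit ~ {weight_memory_gb(params, bits):.1f} GB")
```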

Implementation Best Practices

Preprocessing

Optimize input quality

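```python
from PIL import Image

# Image preprocessing
def preprocess_image(image_path, max_side=2240):
    image = Image.open(image_path).convert('RGB')
    # Downscale oversized images; thumbnail() keeps the aspect ratio and never upscales
    if max(image.size) > max_side:
        image.thumbnail((max_side, max_side), Image.Resampling.LANCZOS)
    return image
```

Error Handling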

Robust processing

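```python
import logging

def safe_ocr_processing(ocr_fn, image_path):
    # ocr_fn: any callable that takes a PIL image and returns text, such as
    # your own (hypothetical) wrapper around one of the model snippets above
    try:
        image = preprocess_image(image_path)
        return ocr_fn(image)
    except Exception:
        # Log the full traceback and keep the batch going
        logging.exception("OCR processing failed for %s", image_path)
        return None
```

Output Formatting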

Structured results

```python
from datetime import datetime

def format_ocr_output(raw_text, model_version, confidence_scores=None):
    """Format OCR output with metadata"""
    return {
        "text": raw_text,
        # The snippets above return plain text only, so this is often None
        "confidence": confidence_scores,
        "timestamp": datetime.now().isoformat(),
        "model_version": model_version,  # e.g. "olmOCR-2-7B-1025"
    }
```

These open source OCR models represent the cutting edge of document processing technology. Choose based on your specific requirements (accuracy, speed, language support, or hardware constraints) and start converting documents into clean, structured markdown.