Introduction: Why Serverless Observability Is Non-Negotiable for AWS Lambda

What is Serverless Computing and AWS Lambda?

Serverless computing is a big change in how we build things in the cloud. It takes away all the tricky parts of managing servers yourself. With this approach, your cloud provider automatically gives you the computing power you need, so you can just focus on writing your application code. You don’t have to worry about setting up servers, scaling them, keeping them running, patching them, or balancing the load, which you’d normally do with traditional server-based applications. What’s great about serverless is how fast it helps you go from an idea to a working application. You can build and get things out there really quickly.

AWS Lambda is a key serverless service from Amazon Web Services. It lets you run code in response to events without provisioning or managing any servers. Since AWS fully manages the service, it handles all the underlying infrastructure, including built-in fault tolerance and security. Because Lambda is event-driven and you pay only for what you use, costs can stay low: you’re not paying for idle servers, which can save money compared with traditional setups where you might over-provision resources. AWS Lambda is very flexible; people use it for web applications, data stream processing, batch job automation, and more.

Even though serverless promises to make things simpler by hiding the infrastructure, that very simplicity can actually make other things more complicated. When your application is made up of many small, interconnected pieces, it can feel like a black box if you don’t have good visibility. Plus, the cost savings you get from paying only for what you use depend on how efficiently you’re using things. If your functions aren’t optimized or they’re getting called unexpectedly, your bills can get surprisingly high. So, you need a deep understanding of how your application behaves across all those small, connected pieces. Good operational insight isn’t just nice to have; it’s absolutely essential if you want to get the most out of serverless.

Why is Observability Crucial for Modern Serverless Architectures?

To really get a handle on these modern, distributed systems, it’s important to know the difference between monitoring and observability. Monitoring is about gathering data and making reports on specific things that tell you how healthy your system is. It helps you spot unusual behavior or problems with your system’s state and performance, answering the “what” and “when” of an issue. Observability, on the other hand, is more like being a detective. It digs into how different parts of your distributed system talk to each other, using the data from monitoring tools to figure out why and how problems happen. Observability goes beyond regular monitoring. It adds more context, historical data, and insights into how your system’s pieces interact.

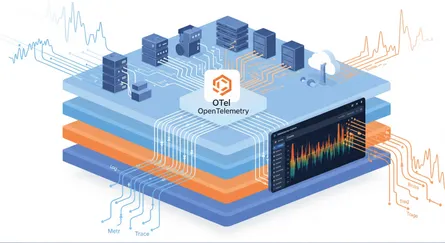

Both monitoring and observability rely on telemetry data, which usually falls into four categories:

- Metrics: These are numeric measurements of system behavior over time, like network traffic or the number of errors your application returns. Monitoring reports on these numbers, while observability uses them, together with the other signals, to explain why they change.

- Events: These are specific actions that happen in your system at a certain time, like a user changing a password or an alert about too many failed login attempts. Events can trigger monitoring alerts and are really important when you’re investigating incidents.

- Logs: These are records generated by your software that keep a chronological account of what your system is doing and how people are using it. Monitoring systems collect these logs, and observability tools then use them for a deeper look into your system.

- Traces: These show the complete path of a single operation as it moves through different connected systems or microservices. Monitoring tools collect traces, and tracing is fundamental to observability.

Because AWS Lambda functions are distributed, short-lived, and event-driven, traditional server-focused monitoring isn’t enough. In a serverless setup, one user request might pass through several Lambda functions, API Gateway, databases, and other services. When an error appears, the question is no longer “which server broke?” Instead, you’re asking, “which of the hundreds or thousands of functions, in what order, talking to which services, actually caused this problem?” Observability, especially distributed tracing, works like a GPS for these complex systems. It maps the whole request journey, from the entry point through every function call and service interaction, helping you pinpoint the exact location and root cause of a failure. This complete picture is essential for reducing mean time to resolution (MTTR) in serverless, where everything depends on everything else and issues can surface far from where they started.

Here’s a table that sums up the main differences between monitoring and observability:

| | Monitoring | Observability |
|---|---|---|
| What is it? | Measuring and reporting on specific metrics within a system to ensure system health. | Collecting metrics, events, logs, and traces to enable deep investigation into health concerns across distributed systems with microservice architectures. |
| Main Focus | Collect data to identify anomalous system effects. | Investigate the root cause of anomalous system effects. |
| Systems Involved | Typically concerned with standalone systems. | Typically concerned with multiple, disparate systems. |
| Traceability | Limited to the edges of the system. | Available where signals are emitted across disparate system architectures. |
| System Error Findings | The when and what. | The why and how. |

Monitoring is a really important part of observability. Good monitoring gives you the detailed metrics, events, logs, and traces that observability then uses to dig deeper into incidents. Monitoring tells you when and what a system error is, while observability explains why and how it happened.

Navigating the Serverless Landscape: Common Monitoring Challenges

What Unique Monitoring Complexities Does Serverless Introduce?

The very things that make serverless appealing also create monitoring challenges. Lambda functions are short-lived: an execution environment starts when it’s needed, runs your code, and may be shut down soon after, leaving behind only the logs, metrics, and traces it emitted. Traditional monitoring, built for long-running servers, doesn’t fit this model, and the fast lifecycle makes it hard to capture continuous state or persistent issues using old methods.

What’s more, breaking applications into many small functions leads to what’s called “function sprawl.” An application can easily have hundreds or even thousands of functions, many of them running at the same time. Each one produces its own logs and metrics, resulting in a huge, fragmented body of data that’s hard to bring together and interpret. This sheer volume and spread of components make it complicated to get a unified view of your system’s health.

Debugging becomes much more complex in serverless setups. An error that starts in one function might travel through several connected services before you even see a symptom in a completely different part of the system. Finding the real problem means tracing a request’s path across all these different parts, which is much harder than debugging one big application. Hiding the infrastructure creates an “invisible infrastructure” problem, where traditional server-focused monitoring tools just aren’t relevant. You need to shift your focus from watching static resources to understanding dynamic, event-driven processes. This makes tools that can connect different event data across a distributed network of functions and services absolutely critical.

Another challenge comes from Lambda functions being “stateless” and how that affects connection management. Lambda can recycle execution environments at any time, so you can’t rely on state held in an environment surviving between invocations. This makes it tough to maintain persistent connections, like database connection pools. Initializing connections outside the handler so warm invocations can reuse them, and using keep-alive to reduce connection errors, both help, but the basic tension of managing stateful resources in an environment that doesn’t guarantee state remains.
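To make the reuse idea concrete, here is a minimal Python sketch: the expensive connection is created lazily in module scope, so warm invocations in the same execution environment reuse it instead of reconnecting. `connect()` is a hypothetical stand-in for a real database driver’s connect call, and the counter exists only to make the reuse visible.

```python
import time

# Module-level state survives between invocations only while Lambda keeps
# this execution environment warm; it is lost when the environment is recycled.
_connection = None
_connections_opened = 0


def connect():
    """Hypothetical stand-in for a real driver's connect() call."""
    global _connections_opened
    _connections_opened += 1
    return {"opened_at": time.time(), "id": _connections_opened}


def get_connection():
    """Return the cached connection, creating it on first use."""
    global _connection
    if _connection is None:
        _connection = connect()
    return _connection


def handler(event, context):
    conn = get_connection()
    # ... run queries with conn here ...
    return {"connection_id": conn["id"], "opened_total": _connections_opened}
```

A production version would also check that the cached connection is still alive before using it, since servers close idle connections, and reconnect if needed.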

How Do Cold Starts Impact Performance and Observability?

A “cold start” is the delay incurred when Lambda has to create a new execution environment for a function: on the first invocation after a period of inactivity, when traffic grows beyond what the existing warm environments can handle, or after you deploy a new version. This startup process involves downloading the function’s code, setting up its environment, and running any initialization code.

Cold starts directly affect performance and, in turn, user experience, especially for latency-sensitive workloads like user-facing apps. Even a few seconds of delay can make your app feel slow. Several factors influence how long a cold start takes. The language runtime plays a big part; for example, .NET and Java functions usually have longer cold starts than those written in Python, Node.js, or Go. Connecting a function to a Virtual Private Cloud (VPC) historically added 10 seconds or more, because an Elastic Network Interface (ENI) had to be attached during the cold start; since AWS moved to shared Hyperplane ENIs in 2019, that penalty has largely disappeared, because the network interface is set up when the function is configured rather than at invocation time.

Observability is essential for dealing with cold starts. Tracking cold start times through CloudWatch Logs (the REPORT line of a cold invocation includes an Init Duration field) and AWS X-Ray helps you find functions that frequently hit these delays and need tuning. While cold starts are usually discussed as a performance issue, they also affect responsiveness and cost: frequent cold starts mean functions repeatedly spend time on initialization. By monitoring cold start rates, your team can tune memory allocation (which also determines how much CPU a function gets) or use strategies like Provisioned Concurrency, which keeps environments initialized in advance for an additional charge. These actions improve responsiveness and can lower overall costs by cutting wasted startup time, which shows how observability data gives you practical insight into both performance and spend.
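One low-cost way to get cold-start data into your logs is to flag the first invocation in each execution environment. The Python sketch below relies on module-level code running once per environment during initialization; the log field names are our own choice, not a required schema.

```python
import json
import time

# Evaluated once per execution environment, during the init phase.
_COLD_START = True
_INIT_TIMESTAMP = time.time()


def handler(event, context):
    global _COLD_START
    cold_start = _COLD_START
    _COLD_START = False  # every later invocation in this environment is warm

    # One structured line per invocation, so cold starts can be counted in Logs Insights.
    print(json.dumps({
        "message": "invocation",
        "cold_start": cold_start,
        "seconds_since_init": round(time.time() - _INIT_TIMESTAMP, 3),
    }))
    return {"cold_start": cold_start}
```

Counting the lines where `cold_start` is true over time gives you a cold-start rate per function. With Provisioned Concurrency, initialization happens ahead of time, so the first invocation flagged here may not actually have waited for it.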

Is Cost Management a Hidden Challenge in Serverless?

People often praise serverless computing for being cost-effective, especially its pay-per-use billing model. But here’s the hidden challenge: costs can shoot up fast if you’re not careful. Because you pay for every time a function runs and for the resources it uses during that run (even if there are errors), inefficient code or unexpected traffic can lead to surprisingly high bills.

A big risk in serverless environments is what are called “wallet attacks” (or denial-of-wallet attacks): Distributed Denial of Service (DDoS) traffic, or accidental loops in which functions keep invoking themselves, triggers massive, uncontrolled scaling. The result is huge, unexpected charges. Automatic scaling is great for handling real demand, but it becomes a financial weak spot when the traffic is malicious or erroneous.

Always-on observability is key for managing costs well. Watching invocation counts, how long functions run, and how much memory they use is crucial for understanding your spending patterns and finding places to save money. AWS Cost Anomaly Detection uses machine learning to keep an eye on your costs and usage, which can help you spot unusual activity, though it might have a delay of up to 24 hours. In serverless, resource consumption and cost are directly linked. This means observability tools aren’t just for technical performance; they’re also for financial control. Without clear visibility into these numbers, you’re vulnerable to sudden cost spikes from bad code, wrong settings, or malicious activity. Observability acts as a “financial safety net,” giving you the data you need to control costs, use resources wisely, and avoid going over budget. It’s a critical tool for financial operations (FinOps) in the serverless world.
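Because cost is a function of the same numbers you monitor, a back-of-the-envelope estimate is easy to script. This Python sketch multiplies invocations by billed duration and memory to get GB-seconds, then adds the per-request charge. The prices are assumed example values for x86 functions in us-east-1 and should be checked against current AWS pricing; the free tier and other charges (such as data transfer) are ignored.

```python
import math
from dataclasses import dataclass

# Assumed example prices (x86, us-east-1); verify against current AWS Lambda pricing.
PRICE_PER_GB_SECOND = 0.0000166667
PRICE_PER_MILLION_REQUESTS = 0.20


@dataclass
class Usage:
    invocations: int        # e.g. monthly Sum of the Invocations metric
    avg_duration_ms: float  # e.g. Average of the Duration metric
    memory_mb: int          # configured memory size


def estimate_monthly_cost(u: Usage) -> float:
    """Rough compute + request cost in USD, ignoring the free tier."""
    # Lambda bills duration in 1 ms increments; rounding the average up is an approximation.
    billed_ms = math.ceil(u.avg_duration_ms)
    gb_seconds = u.invocations * (billed_ms / 1000) * (u.memory_mb / 1024)
    compute = gb_seconds * PRICE_PER_GB_SECOND
    requests = u.invocations / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    return round(compute + requests, 2)
```

For example, 10 million invocations a month averaging 120 ms at 512 MB come to about $12 under these assumptions. Since the estimate is linear in invocations, a recursive loop that multiplies invocations a hundredfold multiplies the bill by roughly the same factor, which is why alarms on Invocations matter.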

AWS Native Powerhouses: CloudWatch for Lambda Monitoring

How Do CloudWatch Metrics Provide Essential Insights into Lambda Performance?

Amazon CloudWatch is the main monitoring and management service in the AWS world. It automatically gathers operational data like logs and metrics from various AWS services, including your Lambda functions. This centralized collection gives you instant, “out-of-the-box” visibility into how your functions are doing without a lot of manual setup. That’s a big plus for getting things deployed quickly and for initial checks. However, while these metrics tell you what’s happening (like lots of errors or long run times), they don’t automatically tell you why. This shows a basic gap in getting full observability: metrics are a great start for monitoring, but you need to add logs and traces for a thorough root cause analysis.

Here are some key metrics for Lambda functions. The first five are built-in CloudWatch metrics that Lambda publishes automatically; the last is a field in each invocation’s REPORT log line:

| Metric Name | Description | Significance |
|---|---|---|
| Invocations | The number of times a Lambda function is invoked. | Shows demand and usage patterns; high counts might mean you need to scale downstream services or adjust concurrency limits. |
| Errors | The number of invocations that resulted in a function error (such as unhandled exceptions, runtime problems, timeouts, or configuration issues). | Indicates your function’s reliability and stability; essential for finding problems. |
| Duration | How long (in milliseconds) the function code spends processing an event. | Essential for performance tuning and cost management, since you’re billed partly on execution time. |
| Throttles | The number of invocation requests Lambda rejected because you reached your concurrency limits. | Points to concurrency bottlenecks that can hurt your application’s responsiveness. |
| ConcurrentExecutions | The number of function instances processing events at the same time. | Helps you manage function scaling and stay within concurrency limits. |
| Max Memory Used (REPORT log field) | The most memory (in MB) your function used during a single invocation. | Crucial for right-sizing memory, because more memory also means more CPU, which affects both performance and cost. |

Looking at the Max Memory Used field, which you can see in the REPORT entry for each function call in CloudWatch Logs, is especially helpful for optimizing memory allocation and, by extension, how much CPU a function gets. Tools like the open-source AWS Lambda Power Tuning project can help you find the best memory setup.
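If you want these numbers outside the console, the REPORT line is straightforward to parse. The Python sketch below assumes the plain-text log format (tab-separated `Key: value unit` fields) rather than Lambda’s JSON log format, and derives memory utilization plus a cold-start flag from the presence of `Init Duration`.

```python
import re

# Matches "Key: 123.45 ms" / "Key: 87 MB" pairs in a plain-text REPORT line.
_FIELD = re.compile(r"([A-Za-z][A-Za-z ]*?): ([\d.]+) (ms|MB)")


def parse_report(line: str) -> dict:
    """Extract duration, memory, and cold-start details from a Lambda REPORT line."""
    fields = {key: float(value) for key, value, _unit in _FIELD.findall(line)}
    memory_size = fields.get("Memory Size")
    max_used = fields.get("Max Memory Used")
    return {
        "duration_ms": fields.get("Duration"),
        "billed_duration_ms": fields.get("Billed Duration"),
        "memory_size_mb": memory_size,
        "max_memory_used_mb": max_used,
        # Init Duration is only reported for invocations that went through a cold start.
        "cold_start": "Init Duration" in fields,
        "memory_utilization": max_used / memory_size if memory_size and max_used else None,
    }
```

Consistently low utilization suggests the function may be over-provisioned, but because memory also sets CPU, a smaller setting is only cheaper if duration doesn’t grow to compensate; that trade-off is exactly what Power Tuning measures.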

Unlocking Deeper Understanding with CloudWatch Logs and Logs Insights

AWS Lambda automatically captures logs for all function invocations and sends them to CloudWatch Logs, as long as your function’s execution role has the right permissions. By default, these logs go into log groups specific to each function, usually named /aws/lambda/<function-name>. While metrics give you symptoms, logs are like the detailed “crime scene” evidence of what happened during a function’s run.

CloudWatch Logs Insights is a powerful, interactive tool that helps you analyze this log data. It turns raw, often huge, log streams into data you can easily search. This lets engineers dig into specific requestIds and understand the sequence of events that led to an error or a slowdown. This ability is critical for moving beyond what happened to why and how, forming the backbone of effective serverless debugging.

Here are some tips for effective log analysis:

- Start Simple: Begin with basic queries looking for error messages, then make them more specific.

- Group by Time: Use time intervals to spot trends or issues that keep coming back.

- Filter Smartly: Narrow down your logs to focus on specific error types or serious problems. For example, a filter such as `filter @message like /ERROR/ or @message like /Task timed out/` finds invocations that logged errors or timed out.

- Structured Logging: Use structured JSON logging with key-value pairs such as `timestamp`, `level`, `message`, and the AWS request ID. This makes filtering and analysis in Logs Insights much easier.
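As a minimal illustration of the structured-logging tip, the Python sketch below uses only the standard `logging` module to emit one JSON object per line. The field names, and passing the request ID through `extra=`, are our own choices rather than a required schema; libraries such as Powertools for AWS Lambda offer a ready-made logger if you prefer not to maintain this yourself.

```python
import json
import logging
import sys
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object on one line."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "message": record.getMessage(),
            # Supplied per call via logging's extra= argument; None if absent.
            "requestId": getattr(record, "request_id", None),
        }
        return json.dumps(entry)


def make_logger(name: str = "app") -> logging.Logger:
    """Build a logger that writes JSON lines to stdout, which Lambda sends to CloudWatch Logs."""
    logger = logging.getLogger(name)
    stream = logging.StreamHandler(sys.stdout)
    stream.setFormatter(JsonFormatter())
    logger.handlers = [stream]
    logger.setLevel(logging.INFO)
    logger.propagate = False  # avoid duplicate lines via the root logger
    return logger


logger = make_logger()


def handler(event, context):
    # In the Python runtime, the context object exposes the request ID as aws_request_id.
    request_id = getattr(context, "aws_request_id", "local-test")
    logger.info("order processed", extra={"request_id": request_id})
    return {"ok": True}
```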

Here’s an example of a CloudWatch Logs Insights query to find errors:

```
fields @timestamp, @requestId, @message
| filter @message like /ERROR/ or @message like /Task timed out/
| sort @timestamp desc
| limit 50
```

And if you want to dig into a specific request:

```
fields @timestamp, @message
| filter (@message like /ERROR/ or @message like /Task timed out/) and @requestId = "your-request-id"
| sort @timestamp asc
```

Setting Up Proactive Alerts with CloudWatch Alarms: Your First Line of Defense

CloudWatch Alarms help you catch problems early by letting you set thresholds on important Lambda metrics. When a metric goes past a certain point, an alarm can go off, acting as your first line of defense. For instance, you can set an alarm based on how long you expect a function to run to spot bottlenecks or delays.

You can connect these alarms to Amazon SNS (Simple Notification Service) topics, which then deliver alerts through channels like email, SMS, or chat integrations such as Slack. This ensures the right teams hear about critical issues quickly.

Beyond sending notifications, CloudWatch Alarms can also invoke Lambda functions when an alarm changes state, either directly or through an SNS topic. This lets you set up automatic remediation, such as giving more memory to a struggling function or rolling back a bad deployment. Automation like this reduces MTTR and takes load off your team, pushing serverless setups toward being more resilient and autonomous. Alarms are the link that takes you from reacting to problems to fixing them proactively and automatically.
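Here is a hedged Python sketch of the memory-bump remediation: a function subscribed to the alarm’s SNS topic reads the `FunctionName` dimension from the alarm message and raises that function’s memory by a fixed step, capped at Lambda’s 10,240 MB maximum. The step size and the message shape are assumptions; in practice more memory only helps if the errors are timeouts or out-of-memory failures, so a real remediation should check that first. The `client` parameter exists so the logic can be exercised without AWS.

```python
import json

MAX_MEMORY_MB = 10_240  # Lambda's maximum memory setting
STEP_MB = 256           # hypothetical increment per alarm; tune for your workload


def parse_alarm(event: dict) -> dict:
    """Decode the CloudWatch alarm JSON that SNS delivers in the Message field."""
    return json.loads(event["Records"][0]["Sns"]["Message"])


def function_name_from_alarm(alarm: dict) -> str:
    """Find the FunctionName dimension the alarm was defined on."""
    for dim in alarm["Trigger"]["Dimensions"]:
        if dim["name"] == "FunctionName":
            return dim["value"]
    raise ValueError("alarm has no FunctionName dimension")


def next_memory_size(current_mb: int) -> int:
    return min(current_mb + STEP_MB, MAX_MEMORY_MB)


def handler(event, context, client=None):
    alarm = parse_alarm(event)
    if alarm.get("NewStateValue") != "ALARM":
        return {"skipped": True}  # ignore OK / INSUFFICIENT_DATA transitions
    if client is None:
        import boto3  # bundled with the Lambda Python runtime
        client = boto3.client("lambda")
    name = function_name_from_alarm(alarm)
    current = client.get_function_configuration(FunctionName=name)["MemorySize"]
    new_size = next_memory_size(current)
    if new_size != current:
        client.update_function_configuration(FunctionName=name, MemorySize=new_size)
    return {"function": name, "old_mb": current, "new_mb": new_size}
```

The cap keeps repeated alarms from raising memory indefinitely, and skipping non-ALARM states prevents the function from acting when the alarm recovers.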

Here’s an example of an AWS CLI command that creates a CloudWatch alarm which fires when a function reports more than five errors in a one-minute period:

```shell
aws cloudwatch put-metric-alarm \
  --alarm-name "HighErrorRate" \
  --namespace AWS/Lambda \
  --metric-name Errors \
  --dimensions Name=FunctionName,Value=your-function-name \
  --statistic Sum \
  --period 60 \
  --evaluation-periods 1 \
  --threshold 5 \
  --comparison-operator GreaterThanThreshold \
  --alarm-actions arn:aws:sns:region:account-id:your-sns-topic
```

Visualizing Your Serverless Health with CloudWatch Dashboards

In complex serverless environments with many distributed parts, having one clear, combined view of how things are running is incredibly valuable. CloudWatch Dashboards give you customizable web-based interfaces that offer a unified overview of various metrics, logs, and alarms across your AWS resources. These dashboards are like your “control center,” letting your teams see how different pieces of data connect at a glance, spot trends, and quickly check the overall system status.

CloudWatch offers both automatic dashboards, which AWS sets up for common services like Lambda, and manual dashboards, which give you the flexibility to create custom views tailored to your specific monitoring needs. Dashboards support various types of widgets, including line charts for comparing metrics, number widgets for showing single values, log tables for raw log data, and alarm status widgets for a quick look at your alerts. The ability to share these dashboards with your team and other stakeholders helps everyone stay on the same page operationally, makes it easier to handle incidents, and builds a shared understanding of your application’s health.

Gaining Enhanced Visibility with CloudWatch Lambda Insights

CloudWatch Lambda Insights is a special feature that makes it easier to gather, see, and dig into detailed performance metrics, errors, and logs just for your Lambda functions. This service acts like a higher-level, managed monitoring agent specifically for Lambda functions. By taking away the hassle of manual setup and offering pre-curated performance metrics and visualizations, it makes it much simpler to get deep insights into performance.

When you turn it on, Lambda Insights reports eight specific metrics per function, and each function invocation sends about 1 KB of log data to CloudWatch. This detailed data helps you do deeper performance analysis. You can view metrics across many Lambda functions in a “Multi-function” view or zoom in on a single function for granular performance data, including error rates, CPU, memory, and network usage.

Lambda Insights is built on AWS Lambda Extensions, which let diagnostic tools integrate deeply into the Lambda execution environment and capture information throughout the function’s lifecycle. The service works on a pay-per-use model, meaning you only pay for the metrics and logs it reports, with no minimum fees. This makes it cost-effective for functions that get called at different rates. This shows how AWS is working to provide more integrated, “observability with almost no setup” for their serverless offerings, making advanced troubleshooting more accessible to more people.

Tracing the Journey: AWS X-Ray for Distributed Observability

How Does X-Ray Provide End-to-End Tracing for Lambda Functions?

AWS X-Ray is a service that gives you a complete picture of how requests move through your applications. It helps developers and operators understand how their Lambda functions interact with other AWS services and find performance bottlenecks. In a serverless setup, one user request can go through many Lambda functions, API Gateways, databases, and other services. Without a clear map, trying to debug something that crosses all these boundaries is super tough.

X-Ray solves this by collecting data about requests as they travel through different resources in an application. It processes this trace data to create a service map, which visually shows all the connected services and how they relate, along with searchable summaries of traces. This service map is like a GPS for your serverless microservices. It gives you a visual representation of the entire request journey and helps you pinpoint the exact location and cause of failures or slow spots. This is essential for understanding how services depend on each other and for turning chaotic distributed systems into understandable flows.

Lambda functions work seamlessly with X-Ray. Lambda runs the X-Ray daemon and automatically records segments with details about calling and running the function. X-Ray also supports tracing applications that are event-driven, like those using Amazon SQS (Simple Queue Service) and Amazon SNS (Simple Notification Service). This gives you end-to-end visibility of requests as they’re queued and processed by downstream Lambda functions, connecting traces from where they start to where they end up.

Active vs. PassThrough: Choosing the Right Tracing Strategy for Your Application

Lambda supports two different tracing modes for X-Ray: Active and PassThrough. Having these two modes means you can be smart about your observability, because not every tracing need is the same.

- Active Tracing Mode: When you turn on `Active` tracing, Lambda automatically creates trace segments for function invocations and sends them to X-Ray. If an upstream service hasn’t made a sampling decision, Lambda samples one request per second plus 5% of additional requests. If an upstream service explicitly says not to sample, Lambda respects that choice. This mode gives you a full picture of how your function performs and behaves, making it especially useful for troubleshooting and optimization. To enable active tracing using the AWS CLI:

```shell
# Enable Active tracing
aws lambda update-function-configuration --function-name YOUR_FUNCTION_NAME --tracing-config Mode=Active
```

- PassThrough Tracing Mode: In `PassThrough` mode, Lambda simply passes the tracing context along to downstream services without creating its own trace segments or subsegments for the function invocation. This means that even if an incoming call includes a decision to sample the request, Lambda won’t generate traces for the function itself. This mode is valuable for saving money or when downstream services are responsible for making their own tracing decisions. It gives you more control over, and predictability in, where traces are generated, letting you optimize your tracing strategy and manage overhead.

To configure `PassThrough` tracing using the AWS CLI:

```shell
# Enable PassThrough tracing
aws lambda update-function-configuration --function-name YOUR_FUNCTION_NAME --tracing-config Mode=PassThrough
```
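Whichever mode you choose, the sampling decision travels with the request in the X-Ray trace header. Inside the Lambda runtime, the header for the current invocation is exposed through the `_X_AMZN_TRACE_ID` environment variable. The Python helper below is a sketch that parses it, for example to log whether a given request was sampled; treat the fields shown (`Root`, `Parent`, `Sampled`) as the common case rather than an exhaustive specification.

```python
import os


def parse_trace_header(header: str) -> dict:
    """Split an X-Ray trace header such as "Root=...;Parent=...;Sampled=1" into a dict."""
    parts = {}
    for item in header.split(";"):
        key, sep, value = item.strip().partition("=")
        if sep:  # skip empty or malformed segments
            parts[key] = value
    return parts


def current_sampling_decision():
    """True for Sampled=1, False for Sampled=0, None if absent or deferred."""
    # Lambda sets this environment variable for the invocation being processed.
    header = os.environ.get("_X_AMZN_TRACE_ID", "")
    sampled = parse_trace_header(header).get("Sampled")
    if sampled == "1":
        return True
    if sampled == "0":
        return False
    return None
```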

The choice between Active and PassThrough mode is a strategic decision. You’re trying to find a good balance between seeing everything and managing costs. You can turn on tracing selectively for important parts of your application or for functions with complex dependencies, instead of just tracing everything. This way, you focus your efforts where you’ll get the most operational benefits, rather than adding unnecessary overhead or cost.

Here’s a table that sums up the main differences between AWS X-Ray tracing modes for Lambda:

| Mode | Behavior | Sampling Decision | Use Case | Pros | Cons |
|---|---|---|---|---|---|
| Active | Lambda automatically creates trace segments and sends them to X-Ray. | If there’s no upstream decision, samples 1 request/second plus 5% of additional requests. Respects upstream “don’t sample” decisions. | Complete visibility into function performance and behavior. | Comprehensive insights for troubleshooting and optimization. | Higher cost due to increased trace generation. |
| PassThrough | Lambda propagates tracing context to downstream services without creating its own segments. | Respects incoming tracing context; does not create segments even if the incoming request is sampled. | Cost optimization; when downstream services handle tracing decisions; propagating context without local tracing overhead. | Reduced tracing costs and overhead; greater control over where traces are generated. | Less granular visibility into the specific Lambda function’s execution. |
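To see what Active mode’s default sampling means for volume and cost, here is a rough Python estimate. It treats the default rule as a single budget of one traced request per second plus 5% of the rest, assumes steady traffic, and uses an assumed price of $5 per million traces recorded while ignoring the free tier; check current X-Ray pricing before relying on the figure.

```python
# Simplified model of the default rule: a reservoir of one request per second,
# plus a fixed rate of 5% of the requests beyond it.
RESERVOIR_PER_SECOND = 1.0
FIXED_RATE = 0.05
# Assumed price for illustration only; check current AWS X-Ray pricing.
PRICE_PER_MILLION_TRACES = 5.00
SECONDS_PER_30_DAYS = 30 * 24 * 3600


def sampled_per_second(requests_per_second: float) -> float:
    """Expected traced requests per second under reservoir + fixed-rate sampling."""
    reservoir = min(requests_per_second, RESERVOIR_PER_SECOND)
    beyond = max(requests_per_second - RESERVOIR_PER_SECOND, 0.0)
    return reservoir + FIXED_RATE * beyond


def monthly_traces(requests_per_second: float) -> float:
    return sampled_per_second(requests_per_second) * SECONDS_PER_30_DAYS


def monthly_trace_cost(requests_per_second: float) -> float:
    """Rough cost of recorded traces in USD, ignoring the X-Ray free tier."""
    return monthly_traces(requests_per_second) / 1_000_000 * PRICE_PER_MILLION_TRACES
```

At a steady 100 requests per second this works out to about 5.95 traces per second, roughly 15.4 million traces and about $77 over a 30-day month under these assumptions.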

Integrating X-Ray with Your Lambda Functions: A Practical Guide

Turning on X-Ray tracing for Lambda functions is pretty easy. You can set up active tracing right from the Lambda console. Just go to your function’s “Configuration” tab, then “Monitoring and operations tools,” and turn on “Active tracing” under the AWS X-Ray section.

For more detail within your function’s code, developers can include the X-Ray SDK in their Lambda function. The SDK lets you instrument outgoing calls to other AWS services or external APIs, and add annotations (indexed key-value pairs you can filter traces by) and metadata (non-indexed data) to subsegments. This gives you specific detail about what’s happening inside each execution. X-Ray SDKs are available for various runtimes, including Go, Java, Node.js, and Python.
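The Python sketch below shows the SDK’s subsegment API in use: `xray_recorder.in_subsegment()` opens a subsegment under the function segment that Lambda creates, and `put_annotation()` / `put_metadata()` attach data to it. The `traced_step` helper, the `charge_card` step, and the event fields are hypothetical; the import fallback exists only so the sketch runs locally without the `aws-xray-sdk` package. To trace outgoing AWS SDK calls automatically you would also call `patch_all()` (not shown).

```python
import contextlib

try:
    # Real SDK: pip install aws-xray-sdk
    from aws_xray_sdk.core import xray_recorder
except ImportError:  # lets the sketch run locally without the SDK
    xray_recorder = None


@contextlib.contextmanager
def traced_step(name, annotations, metadata=None):
    """Wrap a block of work in an X-Ray subsegment when the SDK is available."""
    if xray_recorder is None:
        yield None
        return
    with xray_recorder.in_subsegment(name) as subsegment:
        if subsegment is not None:  # None when there is no parent segment
            for key, value in annotations.items():
                subsegment.put_annotation(key, value)  # indexed, usable in trace filters
            for key, value in (metadata or {}).items():
                subsegment.put_metadata(key, value)    # stored with the trace, not indexed
        yield subsegment


def handler(event, context):
    items = event.get("items", [])
    with traced_step("charge_card",
                     {"order_id": event.get("order_id", "unknown")},
                     {"cart_size": len(items)}):
        total = sum(item["price"] for item in items)
    return {"total": total}
```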

It’s important to remember one limitation: while the X-Ray SDK lets you create subsegments and add notes and metadata to them, your function’s code can’t change the main segment that the Lambda service creates.

Adding the X-Ray SDK and custom instrumentation takes extra work and can slightly increase your deployment package size and runtime overhead. The best practice of enabling X-Ray only for critical functions or those with complex dependencies acknowledges this trade-off: observability, while crucial, should be a deliberate investment.

Beyond Native: Leveraging Datadog for Comprehensive Serverless Monitoring

What Enhanced Capabilities Does Datadog Offer for AWS Lambda Observability?

While AWS’s native tools give you strong monitoring capabilities, third-party solutions like Datadog offer additional features for complete serverless observability. This is especially helpful if you’re using multiple clouds or a mix of cloud and on-premises systems. Datadog aims to give you end-to-end visibility into your serverless applications, helping you reduce mean time to detect (MTTD) and mean time to resolve (MTTR).

Datadog stands out because it offers a single platform that brings together and connects all your metrics, traces, and logs from every function run into one clear view. This “one place to see everything” approach makes it less complicated to manage different monitoring tools and gives you a consistent view across your whole tech stack. That’s a big advantage for large, complex organizations that might be using different cloud providers or have their own on-premises infrastructure.

The platform gives you details down to each function, helping you pinpoint exactly which resources are causing errors, high latency, or cold starts in your serverless environments. Datadog also offers smarter alerting, including real-time alerts for memory, timeout, and concurrency metrics, which helps prevent bad experiences for your end-users. Its “Serverless Warnings” feature automatically suggests ways to fix errors and performance problems, using CloudWatch metrics and Lambda REPORT log lines.

What’s more, Datadog can gather custom business metrics right from your functions. This lets you connect them with health metrics and see them on customizable dashboards. This capability helps break down data silos and gives development, operations, and business teams one unified source of truth. Datadog also makes setup simple with “observability with almost no setup” through integrations with AWS SAM, Serverless Framework, and AWS CDK.
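For custom business metrics, Datadog’s Python Lambda library exposes a `lambda_metric` function. The sketch below assumes the function is already instrumented with the Datadog Lambda layer or handler wrapper; the metric name, tags, and event shape are hypothetical, and the fallback definition only lets the example run without the library installed.

```python
try:
    # Real library: datadog-lambda (also shipped in the Datadog Lambda layer)
    from datadog_lambda.metric import lambda_metric
except ImportError:  # local fallback so the sketch runs without Datadog
    _recorded = []

    def lambda_metric(metric_name, value, timestamp=None, tags=None, **kwargs):
        _recorded.append((metric_name, value, tuple(tags or ())))


def handler(event, context):
    order_total = sum(item["price"] for item in event.get("items", []))
    # Hypothetical business metric; tags let you slice it next to health metrics.
    lambda_metric(
        "shop.order_value",
        order_total,
        tags=[f"channel:{event.get('channel', 'web')}"],
    )
    return {"order_total": order_total}
```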

How Does Datadog Unify Metrics, Logs, and Traces for a Holistic View?

Datadog’s way of bringing together metrics, logs, and traces for AWS Lambda involves a thorough way to collect data. Its Lambda extension gathers logs through CloudWatch, and then adds traces, better metrics, and custom metrics from the Datadog Lambda Library. This method ensures that a rich variety of telemetry data gets into the platform.

Datadog can also capture function request and response payloads for each invocation when payload collection is enabled. This gives you crucial information about the data payload, which can speed up troubleshooting by providing key context around why a function failed.

The real power of Datadog isn’t just in collecting these different data streams, but in smartly connecting them across your whole application. Instead of manually piecing together information from separate CloudWatch dashboards, Logs Insights queries, and X-Ray traces, Datadog’s platform automatically links these signals to a specific request or function invocation. This “intelligent correlation” significantly speeds up troubleshooting by presenting a clear story of what went wrong. You can spot critical application issues and jump to relevant traces or logs with just one click, which makes it easier for engineers to understand and helps you fix problems faster (MTTR). This unified visibility lets you fully understand an individual customer request, from when it started through all its associated logs and metrics, giving you a complete picture of your application’s health.

Mastering Serverless Observability: Best Practices for AWS Lambda

Why is a Multi-Layered Monitoring Approach Essential?

For complete observability in serverless environments, just using one tool isn’t enough. Metrics, logs, and traces each offer a unique way to look at system behavior. They provide different pieces of the puzzle that, when put together, give you the whole picture. Metrics offer aggregated numbers, answering “how many errors?” Logs give you granular event details, explaining “what happened during this specific error?” Traces map the request flow across distributed components, revealing “where did the error propagate?” No single signal tells the full story.

So, a multi-layered approach is crucial. This means combining the strengths of AWS native tools (CloudWatch for basic metrics and logs, and X-Ray for distributed tracing) with, where needed, third-party solutions like Datadog for advanced, unified insights in complex or multi-cloud environments. Shifting from thinking about individual tool capabilities to the combined value of different types of telemetry is fundamental to effective serverless observability. It lets you run both high-level health checks and deep root cause analysis.
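To make this division of labor concrete, here is a minimal sketch of a handler that records all three signals for one failed invocation. It assumes the CloudWatch Embedded Metric Format (EMF), in which a specially structured JSON log line is turned into a metric by CloudWatch, and it reads the X-Ray trace header that Lambda exposes in the _X_AMZN_TRACE_ID environment variable when tracing is active. The MyApp namespace, the OrdersFailed metric, and the process_order helper are hypothetical.

```python
import json
import os
import time


def emit_failure_signals(request_id, error_message, now_ms=None):
    """Print one EMF log record that carries a metric, log detail, and trace ID."""
    if now_ms is None:
        now_ms = int(time.time() * 1000)
    record = {
        # EMF metadata: tells CloudWatch to extract "OrdersFailed" as a metric
        "_aws": {
            "Timestamp": now_ms,
            "CloudWatchMetrics": [
                {
                    "Namespace": "MyApp",  # hypothetical namespace
                    "Dimensions": [["Service"]],
                    "Metrics": [{"Name": "OrdersFailed", "Unit": "Count"}],
                }
            ],
        },
        "Service": "orders",  # dimension value
        "OrdersFailed": 1,  # metric: answers "how many errors?"
        "requestId": request_id,  # log detail: "what happened in this error?"
        "error": error_message,
        # Trace link: "where did the error propagate?"
        "traceId": os.environ.get("_X_AMZN_TRACE_ID", "not-traced"),
    }
    print(json.dumps(record))  # in Lambda, stdout goes to CloudWatch Logs
    return record


def process_order(event):
    """Hypothetical business logic; fails when the order ID is missing."""
    if "order_id" not in event:
        raise ValueError("missing order_id")
    return {"statusCode": 200}


def lambda_handler(event, context):
    try:
        return process_order(event)
    except Exception as exc:
        emit_failure_signals(context.aws_request_id, str(exc))
        raise  # still fail the invocation so the Errors metric counts it
```

Putting the request ID and trace ID into the same record as the metric is what lets you pivot from "the error count went up" to the exact invocation and trace behind it.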

How Can You Optimize Lambda Performance Through Continuous Observability?

Observability isn’t just a reactive tool for debugging; it also works as a proactive optimization engine for serverless efficiency. By continuously watching performance metrics and correlating them with traces, your teams get data-driven insights for small, continuous improvements.

- Adjusting Memory and CPU: Testing performance with different memory settings is crucial, because giving your function more memory also gives it more CPU. Watching the Max Memory Used field in CloudWatch REPORT log entries helps you fine-tune memory allocation, leading to the best performance and cost.

- Setting the Right Timeout: It’s important to load test your Lambda functions to figure out the best timeout value, especially when functions make network calls to other services that might not scale as well as Lambda.

- Dealing with Cold Starts: Observability data helps you come up with strategies to reduce cold starts. These include using Provisioned Concurrency to keep function instances initialized, choosing runtimes with fast startup such as Node.js, Python, or Go, and using community solutions like Lambda Warmer.

- Finding Bottlenecks: AWS X-Ray is incredibly valuable for tracing request flows to find performance bottlenecks, like slow parts of your code, inefficient database queries, or slow external API calls. Analyzing complete traces helps you target your optimizations.

- Smart Caching: Caching can significantly cut down execution times and make things faster. This includes application-level caching with services like Amazon ElastiCache for data you request often. Lambda Layers, by contrast, let you package shared dependencies once and reuse them across functions; they simplify packaging rather than caching data.

- Reusing the Execution Environment: Initializing SDK clients and database connections outside your function’s main handler and caching static assets locally in the /tmp directory can make things faster and reduce runtime costs. This is because subsequent invocations on the same execution environment can reuse these resources.

Here’s an example of how you might optimize a slow database call by using batch queries instead of single queries:

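```python
# Before optimization: one database query per user ID
# results = [db.query("SELECT * FROM users WHERE id = %s", (user_id,)) for user_id in batch_of_user_ids]

# After optimization: fetch every user in a single batch query (assumes a non-empty list).
# `db` is a placeholder for your database client; one %s placeholder is generated per ID.
placeholders = ", ".join(["%s"] * len(batch_of_user_ids))
results = db.query(f"SELECT * FROM users WHERE id IN ({placeholders})", batch_of_user_ids)
```

And here’s a simple example of using Amazon ElastiCache for caching in a Lambda function: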

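```python
import redis

# Create the client outside the handler so warm invocations reuse the connection.
# 'my-redis-cluster' stands in for your ElastiCache endpoint.
redis_client = redis.StrictRedis(host='my-redis-cluster', port=6379, db=0, decode_responses=True)

def lambda_handler(event, context):
    cache_key = "some-key"
    cached_value = redis_client.get(cache_key)

    if cached_value is not None:
        # Cache hit: return without touching the database
        return cached_value

    # Cache miss: fetch from the source, then cache the result
    result = fetch_from_database()  # Assume this function fetches data as a string
    redis_client.set(cache_key, result, ex=300)  # expire after 5 minutes (illustrative TTL)
    return result
```

Ensuring Cost Efficiency with Smart Monitoring Strategies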

In a serverless environment, every function call and every resource used adds directly to your cost. This creates a direct “financial feedback loop,” where inefficient code or unoptimized settings immediately hit your budget. Observability gives you the data you need to spot these inefficiencies and potential “denial-of-wallet” attacks.

- Always Watching Your Usage: Closely monitoring invocation counts, how long functions run, and memory usage is critical for understanding your spending patterns and finding places to save money.

- Setting Resource Limits: Setting Lambda Concurrent Execution Limits and appropriate function timeouts is essential to prevent unexpectedly huge bills from traffic spikes or recursive calls.

- Cleaning Up Unused Functions: Regularly deleting Lambda functions you’re no longer using stops them from needlessly counting against deployment package size limits and costing you money.

- Spotting Unusual Costs: Using services like AWS Cost Anomaly Detection, which uses machine learning to constantly watch costs and usage, can help you spot unusual activity in your account, cutting down on false alerts.
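To connect the usage metrics above to spending, the sketch below estimates monthly Lambda compute cost from invocation count, average billed duration, and memory size. It follows Lambda’s pricing model (a per-request charge plus a duration charge in GB-seconds), but the two default prices are assumptions matching published x86 pricing in us-east-1 at the time of writing; check the AWS Lambda pricing page for your region and architecture. It ignores the free tier, ephemeral storage, and data transfer.

```python
def estimate_monthly_cost(
    invocations,
    avg_billed_duration_ms,
    memory_mb,
    price_per_million_requests=0.20,  # assumed price; verify for your region
    price_per_gb_second=0.0000166667,  # assumed x86 price; verify for your region
):
    """Rough Lambda compute cost estimate (no free tier, storage, or transfer)."""
    request_cost = invocations / 1_000_000 * price_per_million_requests
    # GB-seconds = invocations x seconds per invocation x configured memory in GB
    gb_seconds = invocations * (avg_billed_duration_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * price_per_gb_second
    return {
        "gb_seconds": gb_seconds,
        "request_cost": request_cost,
        "compute_cost": compute_cost,
        "total": request_cost + compute_cost,
    }
```

For example, 10 million invocations at 1,024 MB and 120 ms of billed duration come to 1,200,000 GB-seconds, so under the assumed prices the compute charge (about $20) outweighs the $2.00 request charge. Feeding in the Invocations, Duration, and memory figures you already monitor makes the cost of a memory or code change visible before the bill arrives.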

By using observability data, your teams can proactively make functions better, set limits, and spot unusual activity. This turns observability into a critical financial management tool that directly helps you save money and predict costs.

Integrating Observability into Your Development Lifecycle: Shift Left!

Making monitoring and observability a part of your development process from the start, not something you think about later, is crucial for sustainable serverless development. This “shift left” approach makes sure your applications are inherently “built to be observable,” making them easier to debug, optimize, and secure once they’re deployed.

- Setting Up Monitoring Early: Developers should add custom CloudWatch metrics and turn on X-Ray tracing when they create new Lambda functions. This early setup ensures you capture the necessary data from the very beginning. Here’s an example of how you might add a custom metric in your Python Lambda function:

```python
# Adding custom metrics in Python
import boto3

# Create the client outside the handler so warm invocations reuse it
cloudwatch = boto3.client('cloudwatch')

def lambda_handler(event, context):
    # Custom metric: user signups (the metric name is illustrative)
    cloudwatch.put_metric_data(
        Namespace='MyApp',
        MetricData=[{'MetricName': 'UserSignups', 'Value': 1, 'Unit': 'Count'}],
    )
    # Continue with the rest of the Lambda function logic
    return {'statusCode': 200, 'body': 'Function executed successfully!'}
```

- Checking and Adjusting Regularly: You should regularly review and update your monitoring configurations, including CloudWatch Log retention periods, alert thresholds, custom metrics, and X-Ray sampling rates, as your application changes.

- Automatic Error Handling: Setting up strong error handling, like using dead-letter queues to catch failed asynchronous calls and adding custom retry logic for synchronous calls, is vital for resilience.

- Making Functions Idempotent: Writing Lambda functions that produce the same result no matter how many times they’re called with the same input is a key part of building resilient distributed systems. It helps them gracefully handle duplicate events.

- Don’t Let Functions Call Themselves Recursively: Design functions so they never invoke themselves, directly or indirectly (for example, by writing to the same S3 bucket that triggers them). Accidental recursion can result in huge numbers of function calls and increased costs.
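The idempotency practice in the list above can be sketched by deduplicating events on an idempotency key, so a redelivered event returns the stored result instead of repeating its side effect. This assumes each event carries a unique id field (hypothetical; many event sources supply a message or request ID you could use) and falls back to hashing the payload. The in-memory dictionary is for illustration only: it lives in a single execution environment and its check-then-record step is not atomic, so a real implementation would use a shared store with a conditional write, such as DynamoDB.

```python
import hashlib
import json

# Illustration only: a real deployment needs a durable, shared store
_processed = {}
charge_calls = []  # records each time the side effect actually runs


def charge_customer(order):
    """Hypothetical side effect that must not run twice for the same event."""
    charge_calls.append(order["order_id"])
    return {"charged": order["amount"]}


def idempotency_key(event):
    """Use the event's ID if present, otherwise hash the payload deterministically."""
    if "id" in event:
        return str(event["id"])
    payload = json.dumps(event, sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()


def lambda_handler(event, context):
    key = idempotency_key(event)
    if key in _processed:
        # Duplicate delivery: return the earlier result without charging again
        return _processed[key]
    result = charge_customer(event)
    _processed[key] = result
    return result
```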

This proactive approach cuts down on the significant cost and complexity of trying to add observability later in the process. It also helps developers take direct responsibility for the operational health and performance of their serverless functions from day one, leading to more sustainable and resilient serverless applications.
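For the custom retry logic on synchronous calls mentioned under error handling, a common pattern is exponential backoff with full jitter. The sketch below is a generic helper under stated assumptions: TransientError and call_downstream are hypothetical, and which failures count as retryable is your decision. It uses the Lambda context method get_remaining_time_in_millis so that retries never push the function past its configured timeout.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying, such as throttling."""


def call_with_retries(func, max_attempts=3, base_delay=0.1, max_delay=2.0,
                      remaining_ms=None, sleep=time.sleep):
    """Call func(), retrying TransientError with full-jitter exponential backoff.

    remaining_ms is an optional callable returning the milliseconds left before
    the Lambda timeout, such as context.get_remaining_time_in_millis.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            # Full jitter: random delay up to the capped exponential backoff
            delay = random.uniform(0, min(max_delay, base_delay * 2 ** (attempt - 1)))
            # Keep an assumed 500 ms safety margin before the function timeout
            if remaining_ms is not None and remaining_ms() < delay * 1000 + 500:
                raise
            sleep(delay)


def call_downstream():
    """Hypothetical synchronous dependency, such as an HTTP API."""
    return {"statusCode": 200}


def lambda_handler(event, context):
    return call_with_retries(call_downstream,
                             remaining_ms=context.get_remaining_time_in_millis)
```

Failing fast when time runs short turns a silent timeout into an ordinary error that your Errors metric and alarms can see.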

Frequently Asked Questions (FAQs) about AWS Lambda Monitoring

To effectively monitor cold starts, you’ll want to use CloudWatch Logs and AWS X-Ray. These services help you find functions that are experiencing long cold start times, especially those that aren’t called very often. For critical functions that need to be fast, using Provisioned Concurrency is a smart way to keep instances warm and reduce those startup delays. Also, choosing runtimes like Node.js, Python, or Go can help, as they generally have shorter cold start durations. For functions that aren’t as critical, you might look into community solutions like Lambda Warmer to help keep them ready.
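One common way to record cold starts from inside the function is a module-level flag, since module-level code runs only when a new execution environment is initialized. This is a sketch rather than a Lambda feature: the coldStart field name is our own choice. With Provisioned Concurrency, initialization happens before any request arrives, so the flag then marks an environment’s first invocation rather than a delay a user actually felt.

```python
import json
import time

# Module-level code runs once per execution environment, during initialization
_INIT_TIME = time.time()
_is_cold_start = True


def lambda_handler(event, context):
    global _is_cold_start
    cold = _is_cold_start
    _is_cold_start = False  # later invocations on this environment are warm
    # Structured log line you can filter and count in CloudWatch Logs Insights
    print(json.dumps({
        "coldStart": cold,
        "secondsSinceInit": round(time.time() - _INIT_TIME, 3),
    }))
    return {"coldStart": cold}
```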

Here’s an example AWS CLI command to enable Provisioned Concurrency for a Lambda function. Provisioned Concurrency can only be configured on a published version or an alias, not on $LATEST, so the qualifier below is an alias (live is a placeholder name):

```shell
aws lambda put-provisioned-concurrency-config \
    --function-name my-function \
    --qualifier live \
    --provisioned-concurrent-executions 5
```

Cutting down on Lambda monitoring costs involves optimizing how your functions use resources and being smart about your monitoring tools. Make sure your function’s duration and memory allocation are optimized, because these directly affect your bill. Delete any Lambda functions you’re no longer using so they don’t needlessly count against your deployment limits. Set appropriate Lambda Concurrent Execution Limits and timeout values to prevent huge bills from unexpected traffic or recursive loops. For AWS X-Ray, consider using PassThrough tracing mode when full end-to-end visibility isn’t critical, as this reduces how many traces are generated and their associated costs. Be aware of the costs of services like CloudWatch Lambda Insights, which charges based on usage, meaning you pay for the metrics and logs it reports.

A multi-layered approach is generally the way to go for complete serverless observability. AWS’s native tools, like CloudWatch (for metrics, logs, and alarms) and X-Ray (for distributed tracing), give you foundational, deeply integrated, and detailed observability capabilities. Third-party solutions like Datadog offer a unified platform, advanced features like automated warnings and connecting business metrics, and crucial cross-cloud visibility for hybrid or multi-cloud environments. The best choice really depends on how complex your application is, your budget, the tools you’re already using, and your team’s expertise. Often, using a mix of native and third-party tools gives you the most complete and actionable view of your system’s health.

To set up alerts for critical Lambda errors, go to CloudWatch and create an alarm for your Lambda function’s ‘Errors’ metric. Configure the alarm to go off when the error count goes above a certain threshold over a specific time. Link this alarm to an Amazon SNS topic to send notifications via email, SMS, or integrated chat services. For more advanced alerting, use metric filters in CloudWatch Logs to spot specific error patterns within your function logs. You might even consider automating responses to these alarms by triggering another Lambda function to perform self-remediation actions.
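The alarm described above can also be created programmatically. This sketch builds the arguments for boto3’s CloudWatch put_metric_alarm call on the AWS/Lambda Errors metric; the function name, SNS topic ARN, threshold of 5 errors, and one-minute period are placeholder assumptions. The API call sits in a separate function so the parameters can be checked without boto3 or AWS credentials.

```python
def build_error_alarm(function_name, sns_topic_arn, threshold=5,
                      period_seconds=60, evaluation_periods=1):
    """Return keyword arguments for CloudWatch put_metric_alarm on Lambda Errors."""
    return {
        "AlarmName": f"{function_name}-errors",
        "AlarmDescription": f"Errors for {function_name} exceeded {threshold}",
        "Namespace": "AWS/Lambda",
        "MetricName": "Errors",
        "Dimensions": [{"Name": "FunctionName", "Value": function_name}],
        "Statistic": "Sum",  # total errors in each period
        "Period": period_seconds,
        "EvaluationPeriods": evaluation_periods,
        "Threshold": float(threshold),
        "ComparisonOperator": "GreaterThanThreshold",
        "TreatMissingData": "notBreaching",  # no invocations means no errors
        "AlarmActions": [sns_topic_arn],  # notify the SNS topic on ALARM
    }


def create_error_alarm(params):
    """Create the alarm; requires boto3 and AWS credentials."""
    import boto3  # imported here so the builder above works without boto3

    boto3.client("cloudwatch").put_metric_alarm(**params)
```

Usage might look like create_error_alarm(build_error_alarm("my-function", "arn:aws:sns:us-east-1:123456789012:lambda-alerts")), where the account ID and topic name are placeholders.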

The most important metrics to track for Lambda functions give you critical insights into performance, reliability, and cost. These include:

- Invocations: This tells you how often the function is called.

- Errors: This shows the number of failed function calls, including code and runtime errors.

- Duration: This measures how long the function runs, which is directly tied to performance and cost.

- Throttles: This indicates when you’re hitting concurrency limits, pointing to potential bottlenecks.

- ConcurrentExecutions: This reflects how many function instances are running at the same time, showing concurrent usage levels.

- Max Memory Used: Reported in each invocation’s REPORT log line, this helps you optimize memory allocation, which affects CPU availability and cost.

- Cold Start Times: Taken from logs (the Init Duration in REPORT log lines) or from traces, this is essential for understanding how initialization delays affect user experience.
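Because Max Memory Used and cold start times come from log lines rather than the standard AWS/Lambda metrics, it helps to see how they are extracted. The sketch below parses a Lambda REPORT log line into numbers and computes memory headroom; it assumes the usual tab-separated REPORT format, in which Init Duration appears only on invocations that caused a cold start. The sample line is illustrative, not real output.

```python
import re

# Matches numeric "Key: value unit" pairs in a REPORT line, e.g. "Max Memory Used: 70 MB"
_FIELD = re.compile(
    r"(Billed Duration|Init Duration|Duration|Memory Size|Max Memory Used): ([\d.]+) (?:ms|MB)"
)

# Illustrative sample (not real output); Init Duration appears only on cold starts
SAMPLE_REPORT = (
    "REPORT RequestId: 3f1c2a7e-0000-4000-8000-000000000000\t"
    "Duration: 102.25 ms\tBilled Duration: 103 ms\tMemory Size: 128 MB\t"
    "Max Memory Used: 70 MB\tInit Duration: 150.47 ms\t"
)


def parse_report_line(line):
    """Turn a Lambda REPORT log line into a dict of numeric fields."""
    if not line.startswith("REPORT "):
        raise ValueError("not a REPORT line")
    fields = {key: float(value) for key, value in _FIELD.findall(line)}
    fields["cold_start"] = "Init Duration" in fields
    return fields


def memory_headroom(fields):
    """Fraction of configured memory left unused at peak (0.0 means fully used)."""
    return 1 - fields["Max Memory Used"] / fields["Memory Size"]
```

A consistently large headroom suggests the memory setting could come down, but because CPU scales with memory, compare Duration before and after any change rather than assuming it will be cheaper.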

Conclusion: Building Resilient and Optimized Serverless Applications

Complete observability isn’t just helpful; it’s absolutely essential for serverless applications to succeed. That’s because they’re inherently distributed, short-lived, and event-driven. While hiding the infrastructure makes things simpler operationally, it also makes it harder to understand how your application behaves across all its many interconnected parts. This means we need to move away from traditional server-focused monitoring to a more holistic, investigative approach that includes metrics, logs, and traces.

AWS’s native tools, like CloudWatch, give you the basic capabilities for collecting metrics and logs. They let you set up proactive alerts and see everything in one place through dashboards and specialized views like CloudWatch Lambda Insights. AWS X-Ray complements this with powerful distributed tracing, which is crucial for navigating complex microservice interactions and finding performance bottlenecks across different services. Beyond what AWS offers, third-party solutions like Datadog provide a unified layer that brings together telemetry data from various sources into one view. This is especially useful for multi-cloud or hybrid environments.

Constantly analyzing observability data acts as a powerful optimization engine for serverless efficiency. It lets you continuously fine-tune memory allocation and code efficiency, and make strategic use of features like provisioned concurrency to boost performance and reduce cold starts. What’s more, observability acts as a critical “financial safety net”: it gives you the data you need to watch your usage, control costs, and spot unusual activity, directly helping you save money and predict your spending.

Ultimately, strong serverless observability empowers teams to build applications that are more resilient, cost-efficient, and perform better. By making observability a part of your development process early on, using a multi-layered monitoring approach, and continuously using data to make things better (both performance and cost-wise), organizations can truly unlock the full potential of serverless. This turns operational challenges into chances for innovation and efficiency.
